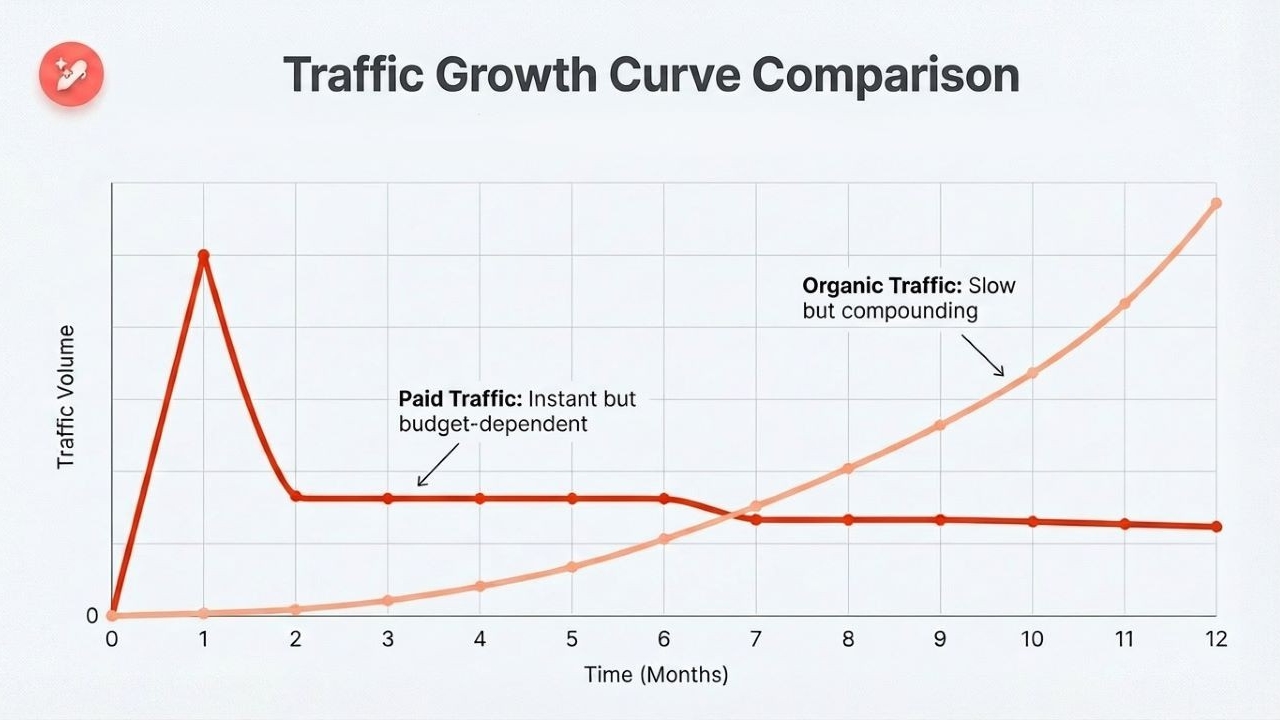

You publish a new article. You wait. Nothing moves.

So you publish another one. Then another. But your traffic graph still looks like a flatline, and your best content is sitting on page two with nothing to show for the effort you put into it.

Here is the uncomfortable truth most SEO guides will not open with: the problem is rarely that you need more content. In the majority of cases, the real culprit is skipping a proper SEO audit, which would uncover the issues stalling your growth so you can fix them.

This guide walks you through how to do a complete SEO audit, step by step. We cover every layer: technical SEO, on-page optimization, content quality, backlinks, local SEO, and the GEO checks needed in 2026.

So, let’s get into it, shall we?

What is an SEO audit?

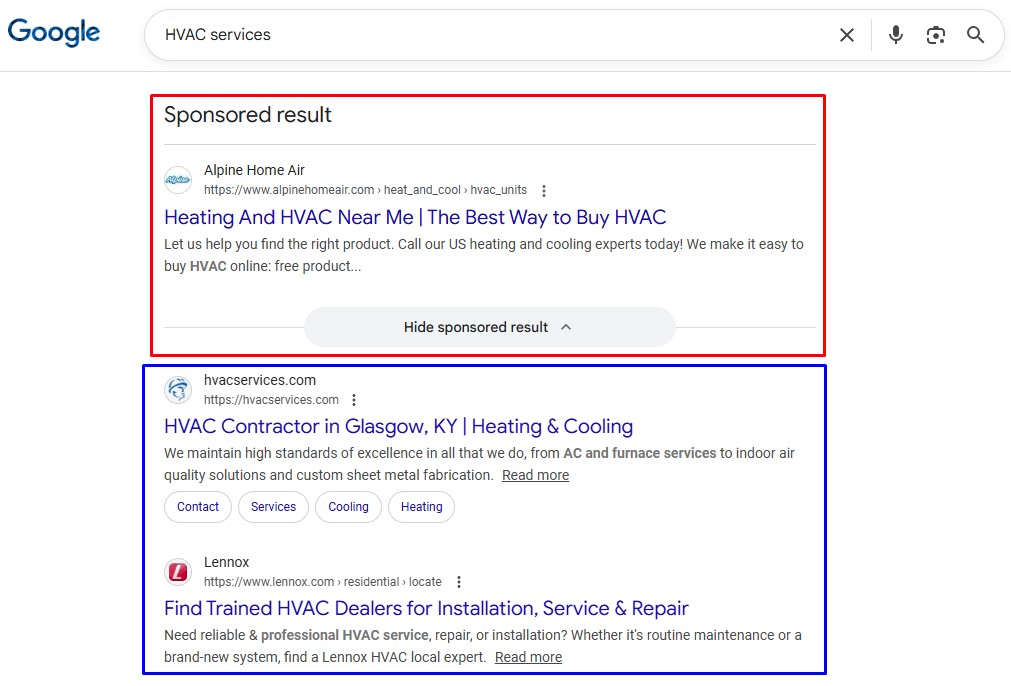

An SEO audit is a structured evaluation of your website’s ability to appear in search results. That covers traditional search engines like Google and Bing, as well as visibility in key SERP features such as featured snippets and AI Overviews.

A thorough SEO audit answers four core questions:

- Can search engines access and properly understand every important page?

- Is your content relevant, thorough, and genuinely useful for the queries you are targeting?

- Does your site carry enough authority to compete for those queries?

- Is your content structured so AI-powered engines can retrieve and cite it?

If you can honestly answer yes to all four, you are in better shape than most. The majority of sites cannot, and so they need a series of checks to help them get the desired rankings.

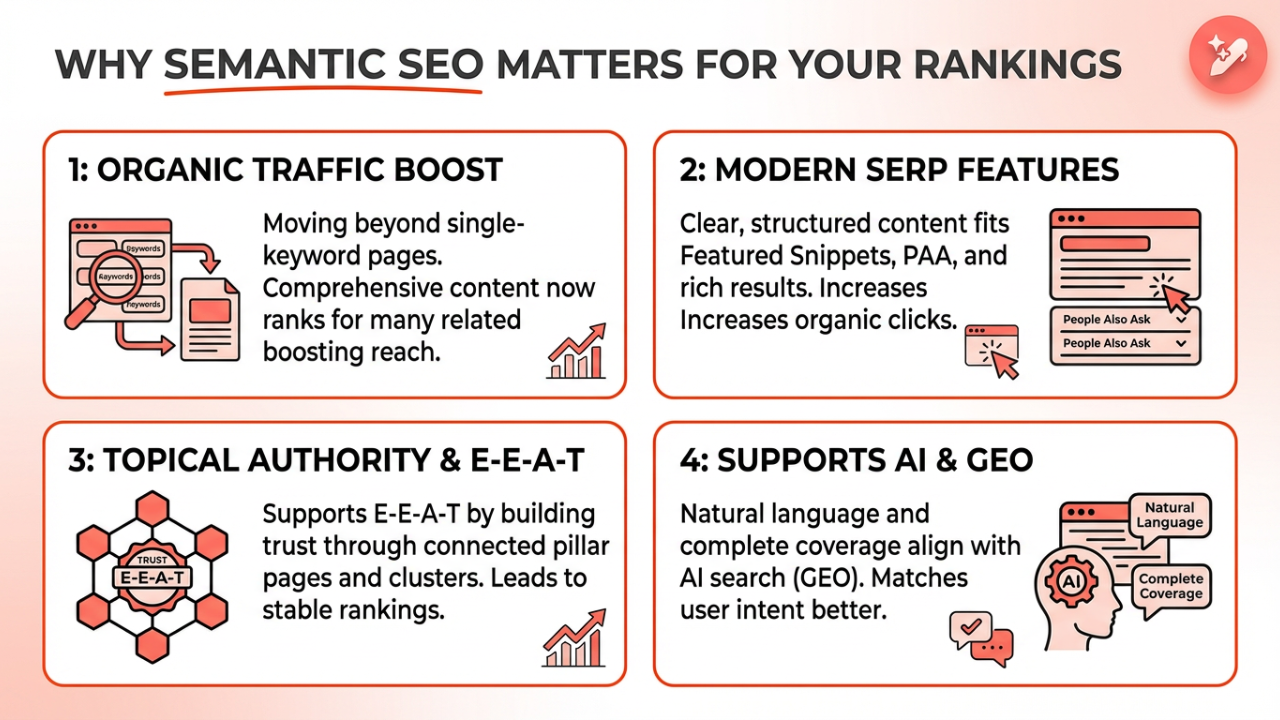

Why an SEO audit matters even more in 2026

Search engines have changed quickly in the past few years. Google’s December 2025 core update put heavy weight on the ‘Experience’ pillar of E-E-A-T, rewarding content with genuine first-hand evidence and penalizing generic, thinly differentiated pages.

Sites that passed through this update had one thing in common: they had recently audited, updated, and deepened their content rather than just adding more of it.

This is why regular SEO audits are so crucial. They help you identify technical issues and thin pages early so that you can safeguard your site from Google penalties and keep the audience engaged for better CTR and ranking.

The 5 types of SEO audits you need to know

Before diving into the steps, understand what an audit actually covers. There are five distinct layers, each targeting a different dimension of your site’s health.

- Technical SEO audit: Can search engines crawl, render, and index your site correctly?

- On-Page SEO audit: Are your pages properly optimized for the right queries?

- Content audit: Is your content current, thorough, and aligned with how people search today?

- Off-Page SEO / backlink audit: Is your link profile helping or quietly working against you?

- Local SEO audit: If you serve a specific geography, is your local presence set up completely?

Now, let’s quickly learn about the tools you’ll need for the five types of SEO audits.

Tools you’ll need for SEO audits

Here is the practical toolkit you’ll need to complete every type of audit and to refresh, regain, and maintain your rankings.

Free tools:

- Google Search Console: Your most important audit tool. Indexing status, click data, Core Web Vitals, and manual actions. Non-negotiable.

- Google PageSpeed Insights: Core Web Vitals and performance data for any URL.

- Screaming Frog SEO Spider: Free up to 500 URLs. Crawls your site the way Googlebot does to inform you about broken links, non-indexed pages, and more.

- Google’s Rich Results Test: Validates your structured data (schema) for pages.

- Bing’s Mobile Friendliness Test: Quick mobile usability check.

- Google Analytics 4: Traffic trends and engagement data.

Paid tools:

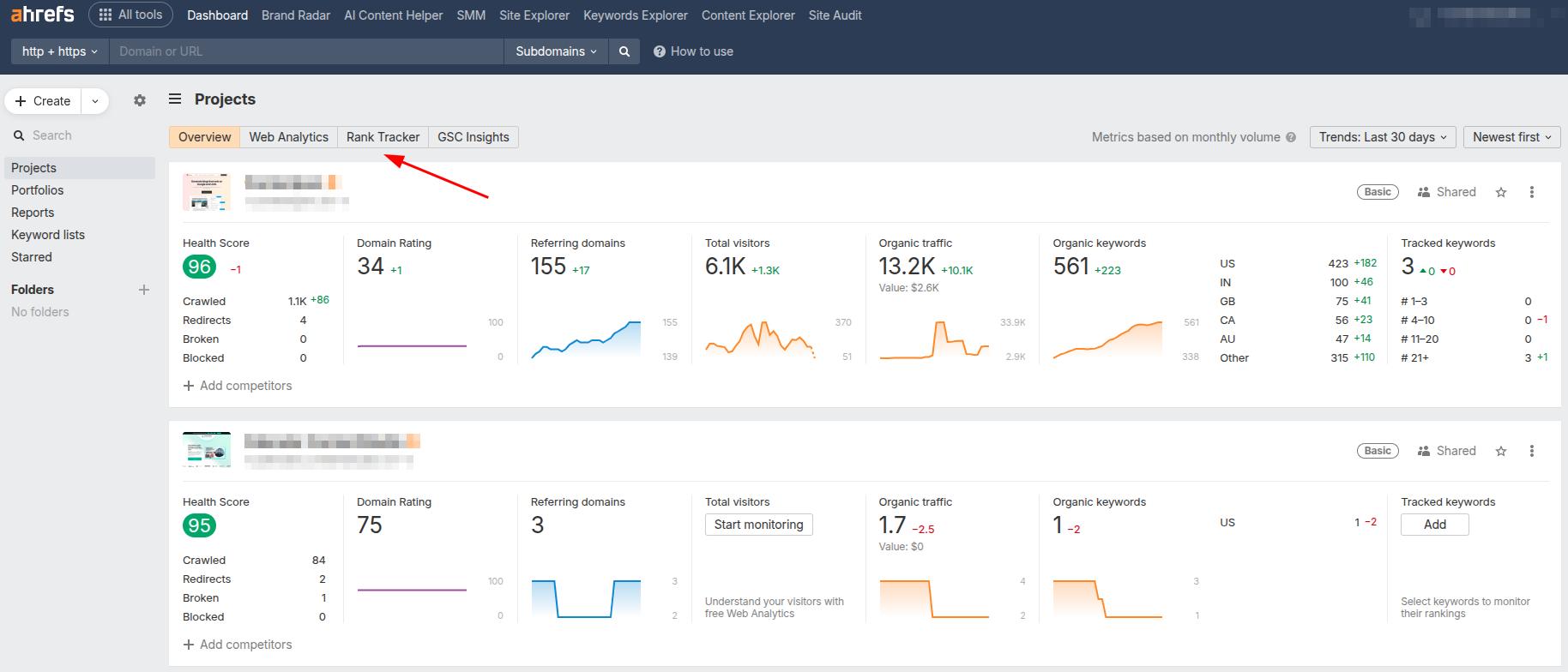

- Semrush or Ahrefs: Backlink analysis, keyword gap analysis, and large-scale site crawling.

- Screaming Frog paid version: Removes the 500-URL cap for larger platforms.

You can also use any other SEO tool you are comfortable with to complete the audit process.

Performing an SEO audit in 16 steps

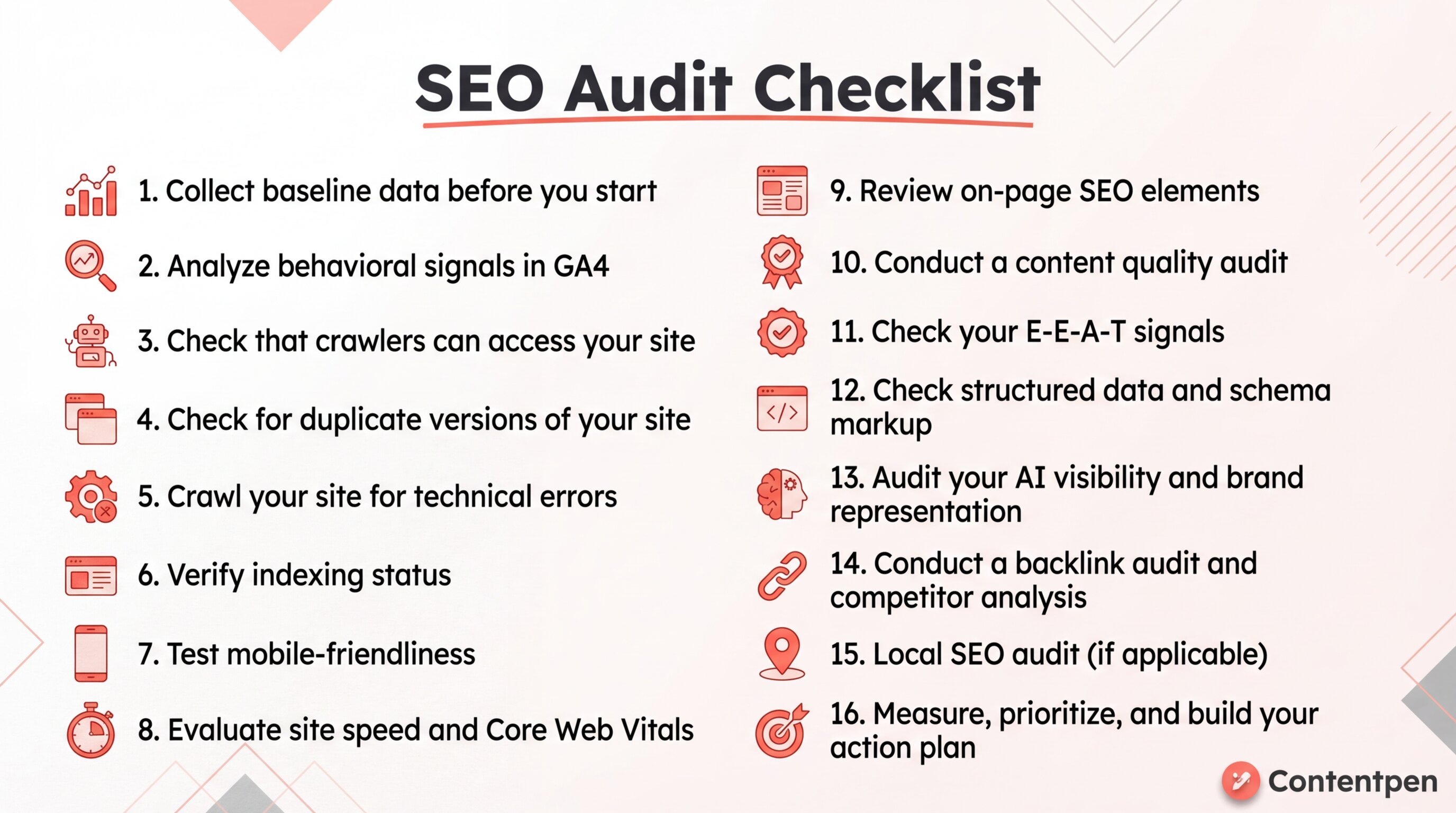

Here’s a quick SEO audit checklist you can scan before diving into the steps:

Use it as a quick reference, then follow the detailed steps below to implement each fix properly.

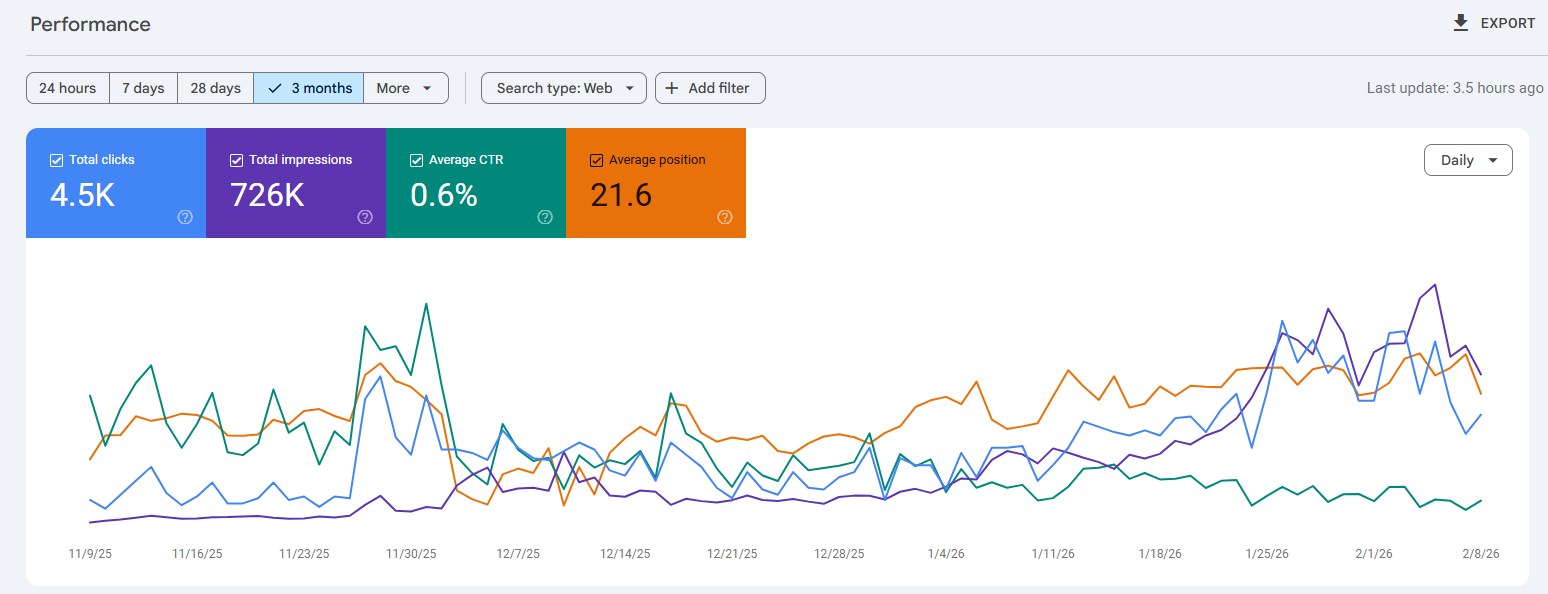

Step 1: Collect baseline data before you start

Before touching a single setting, establish where you are right now. This baseline makes the audit actionable rather than just descriptive. You need something to measure your improvements against.

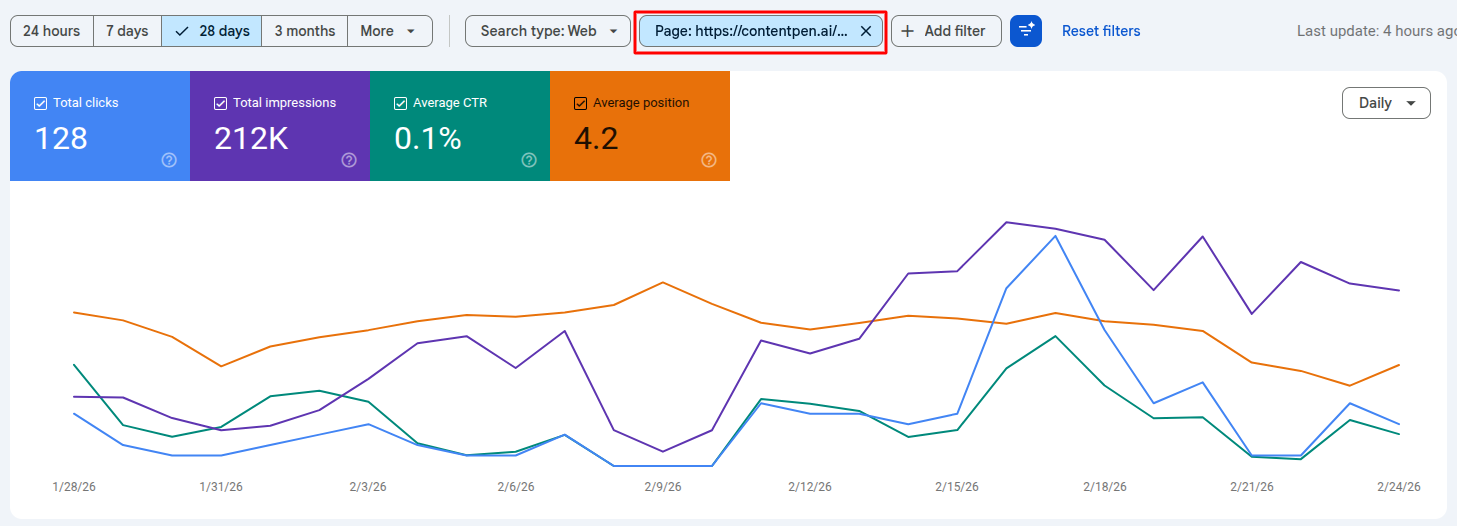

Open Google Search Console and record:

- Total organic clicks for the last 90 days (set the date range via the ‘More’ button in the date filter).

- Your top 10 pages by organic clicks.

- Average click-through rate across the site.

- Current keyword rankings for your five most important target terms.

- Core Web Vitals (pass/fail) status report.

Screenshot or export these numbers. Every fix you implement later should be traceable back to movement in one of these baseline metrics.

Step 2: Analyze behavioral signals in GA4

You recorded the numbers in Step 1. The second step is about reading what those numbers are actually telling you in Google Analytics 4.

GA4’s behavioral data reveals how real users are experiencing your site right now, which tells you something different from what Search Console shows. Search Console tells you how Google sees your pages. GA4 tells you what happens after someone clicks.

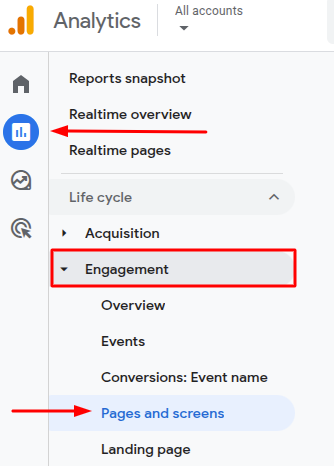

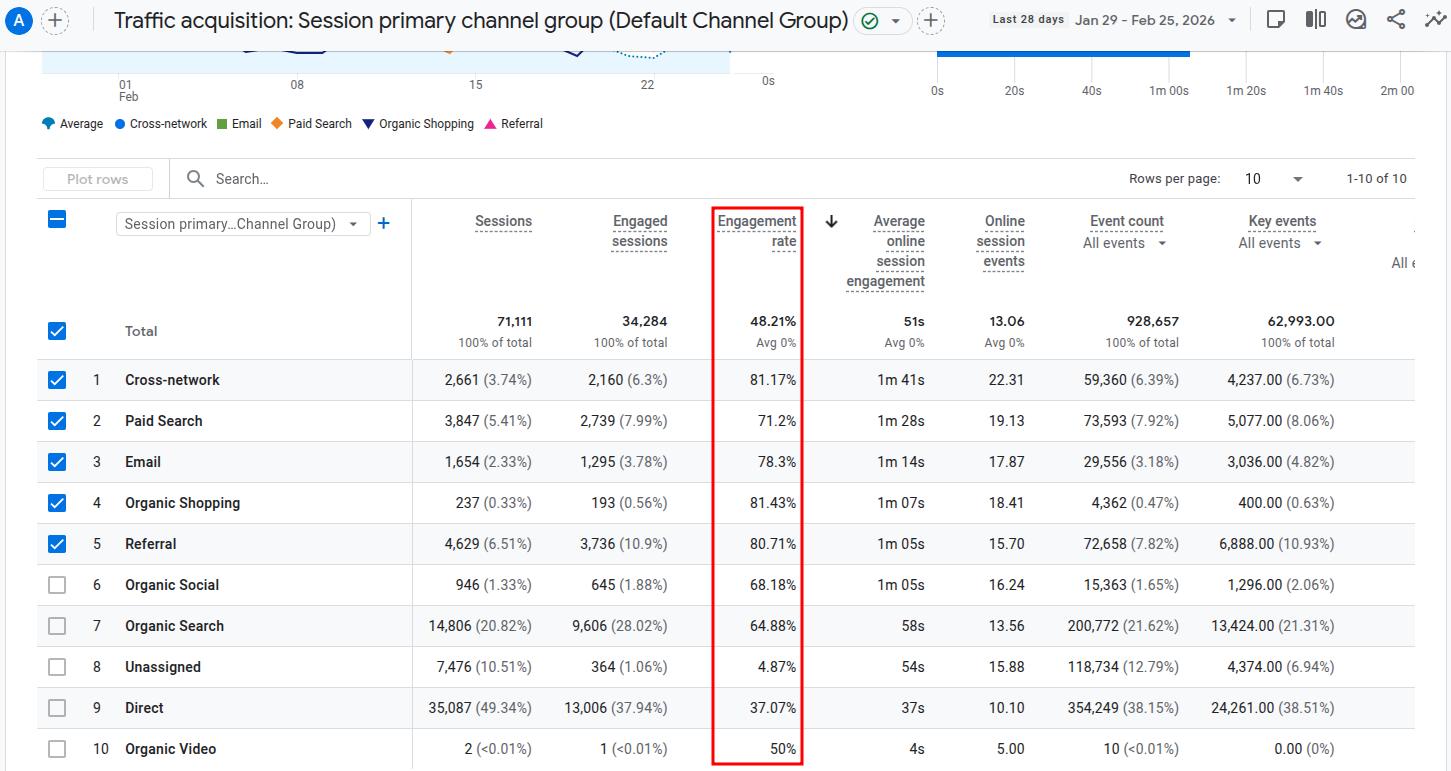

To check behavioral signals in GA4, navigate to ‘Reports > Engagement > Pages and Screens’. Sort by page views to start with your highest-traffic pages. For each one, check:

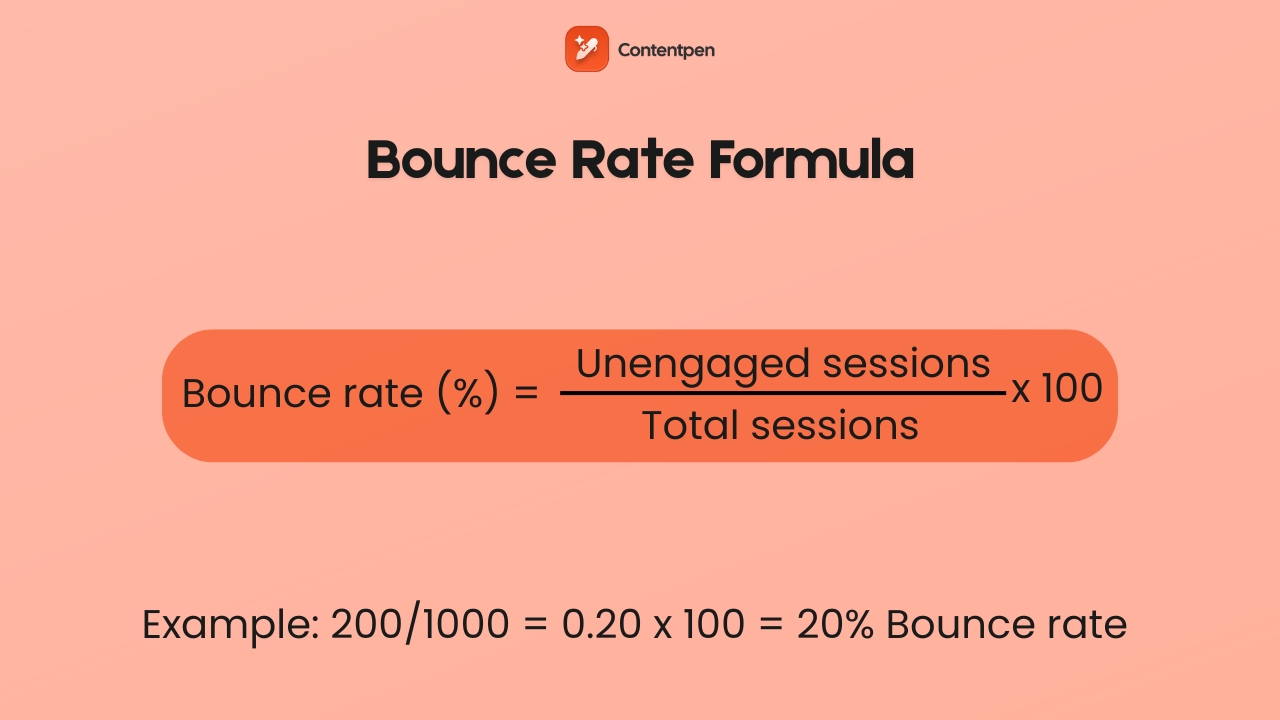

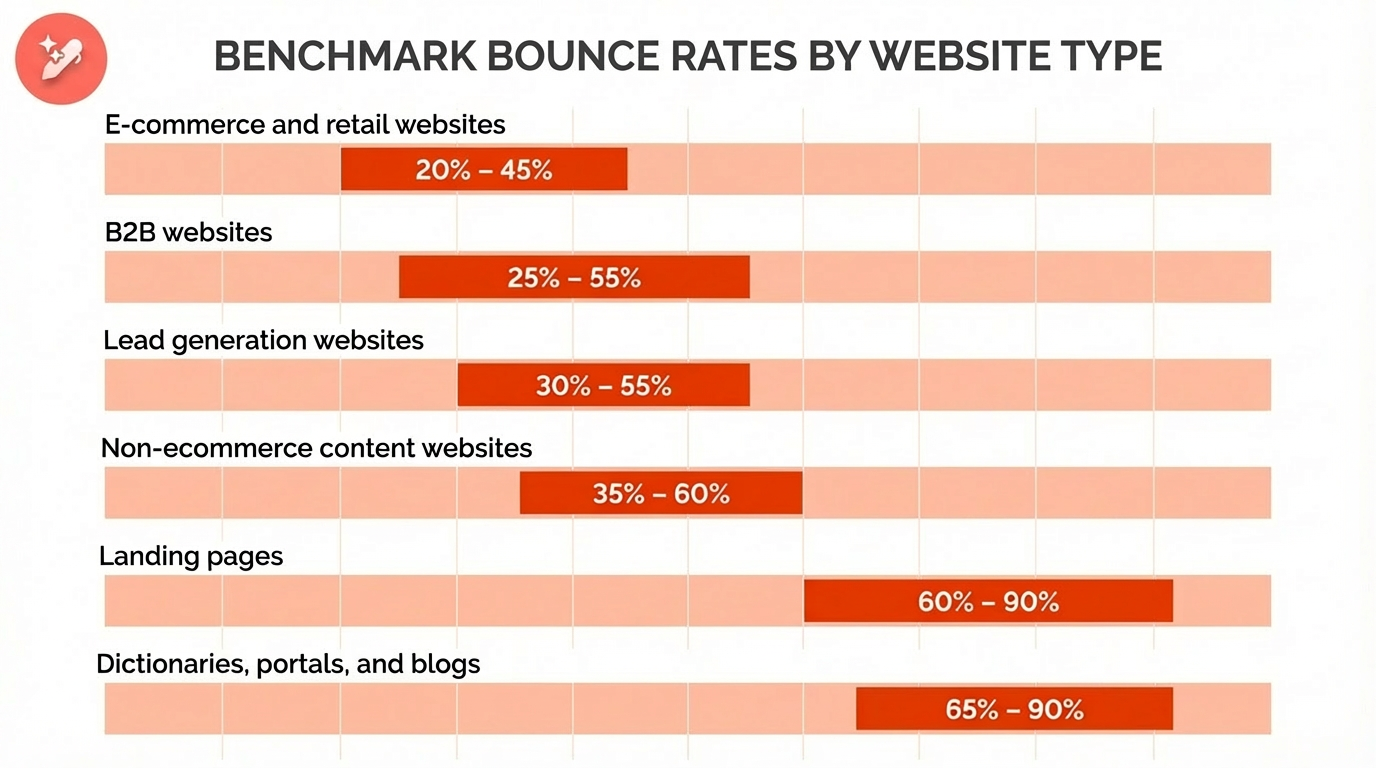

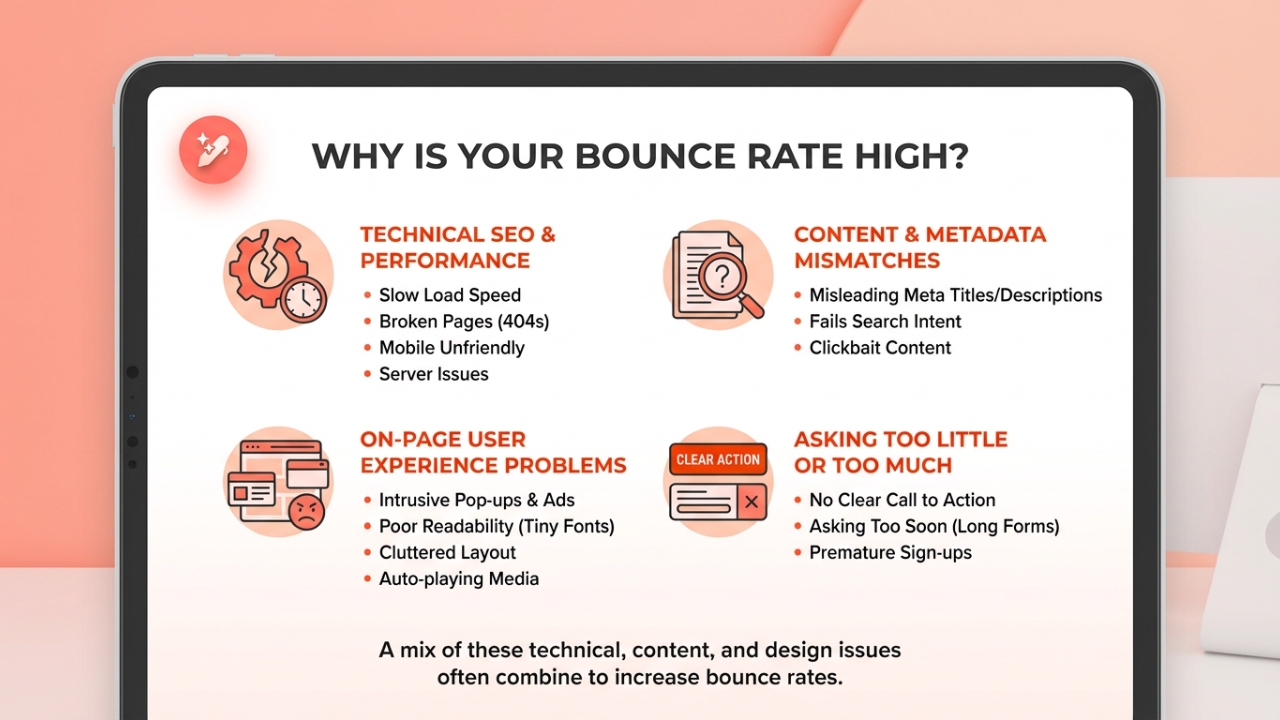

Engagement Rate

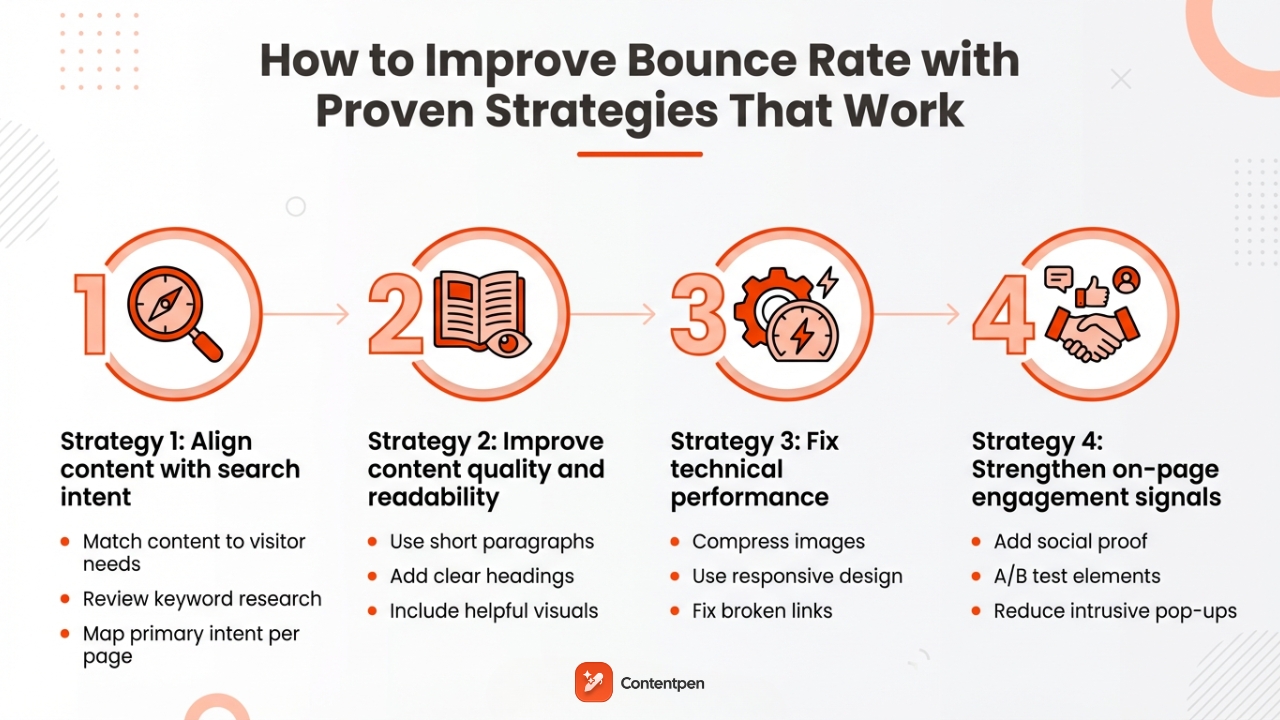

GA4 centers on engagement rate rather than the old bounce rate (bounce rate still exists in GA4, but only as the inverse of engagement rate). An engaged session is one where the user spent at least 10 seconds on the site, viewed more than one page, or triggered a conversion event. Aim for an engagement rate above 60% on your key pages.
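To make that definition concrete, here is a minimal sketch that computes engagement rate from a handful of sessions. The `Session` fields are hypothetical names chosen for this example; they are not GA4’s export schema, and the 10-second threshold simply follows the definition above.

```python
from dataclasses import dataclass


@dataclass
class Session:
    # Hypothetical fields for illustration; not GA4's actual export schema.
    duration_seconds: float
    page_views: int
    conversion_events: int


def is_engaged(session: Session) -> bool:
    """Engaged if it lasted 10+ seconds, had 2+ page views, or converted."""
    return (
        session.duration_seconds >= 10
        or session.page_views >= 2
        or session.conversion_events >= 1
    )


def engagement_rate(sessions: list[Session]) -> float:
    """Share of sessions that count as engaged, from 0.0 to 1.0."""
    if not sessions:
        return 0.0
    engaged = sum(1 for s in sessions if is_engaged(s))
    return engaged / len(sessions)


sessions = [
    Session(duration_seconds=4, page_views=1, conversion_events=0),   # not engaged
    Session(duration_seconds=45, page_views=1, conversion_events=0),  # engaged by time
    Session(duration_seconds=6, page_views=3, conversion_events=0),   # engaged by page views
    Session(duration_seconds=3, page_views=1, conversion_events=1),   # engaged by conversion
]
print(f"Engagement rate: {engagement_rate(sessions):.0%}")
```

Three of the four sample sessions qualify, so this page would sit at 75%, comfortably above the 60% target.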

Average Engagement Time per Session

This is the active time a user spends on your page, not total time on site. A low engagement time on a long-form article (e.g., under 60 seconds on a 2,000-word page) is a strong signal that the user isn’t consuming enough of your content.

Landing Page Performance

Under ‘Reports > Acquisition > Traffic Acquisition’, filter by organic search. Look at which pages users land on from search and then immediately exit. These are pages where your SEO is working (Google is sending people), but your content is failing (people are leaving).

Scroll Depth Events (if configured)

If your GA4 has scroll depth tracking enabled, check the percentage of users scrolling past the 50% and 90% mark on your most important pages. Pages with poor scroll depth beyond the 50% mark suggest that your content loses the reader halfway through.

Cross-reference everything you find here with the Search Console data from Step 1. Then move on to the next steps of the SEO audit checklist.

Step 3: Check that crawlers can access your site

Crawlability is the foundation of everything else. Search engines and AI platforms use automated bots to discover and index your content. If those bots are blocked, your site is invisible regardless of how well everything else is optimized.

Start by opening yourdomain.com/robots.txt in your browser. Your robots.txt file tells crawlers what they can and cannot access. A file that looks like this is correct:

User-agent: *

Allow: /

Disallow: /admin/

A file that looks like this is a catastrophe:

User-agent: Googlebot

Disallow: /

The second example blocks Google from your entire site, and not just the unwanted pages.

In 2026, make sure you are not accidentally blocking retrieval bots like OAI-SearchBot or PerplexityBot. Blocking these types of crawlers makes your content invisible to AI platforms when they are answering user questions.

Crawlers to verify are not blocked:

- Googlebot: Google’s main search crawler

- BingBot: Bing’s crawler, which also influences Microsoft Copilot results

- OAI-SearchBot: Used by ChatGPT for real-time citations

- PerplexityBot: Perplexity’s search crawler
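If you would rather script this check than eyeball the file, the sketch below uses Python’s standard-library `urllib.robotparser` to test each crawler from the list above against a robots.txt body. The sample file and the `crawler_access` helper are illustrative assumptions. Note that Python’s parser applies rules in file order, which can differ from Google’s most-specific-match behavior, so treat it as a first-pass check only.

```python
from urllib.robotparser import RobotFileParser

# Crawlers named in this step.
CRAWLERS = ["Googlebot", "BingBot", "OAI-SearchBot", "PerplexityBot"]


def crawler_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Return {crawler: True if it may fetch `url`} for each crawler."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in CRAWLERS}


# The "catastrophe" rules from this step, plus a catch-all group for other bots.
robots_txt = """\
User-agent: Googlebot
Disallow: /

User-agent: *
Disallow: /admin/
"""

for agent, allowed in crawler_access(robots_txt, "https://yourdomain.com/").items():
    print(f"{agent}: {'allowed' if allowed else 'BLOCKED'}")
```

With this sample file, Googlebot is reported as blocked on the homepage while the other three crawlers are allowed. In practice you would paste in the contents of your own robots.txt.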

Step 4: Check for duplicate versions of your site

Duplicate pages silently dilute SEO authority on a surprising number of sites, especially ones that have been through migrations or CMS changes.

Your site should be accessible at exactly one URL. Test all four of these in a browser:

- http://yourdomain.com

- https://yourdomain.com

- http://www.yourdomain.com

- https://www.yourdomain.com

Only one should load a live site. The other three should redirect automatically to that single canonical URL using 301 redirects. If multiple versions load successfully, Google may treat them as duplicate versions of your site and split your authority between them.

Also, always use the HTTPS version as your canonical. Beyond the marginal ranking benefit, it is a basic security expectation that users and browsers now treat as standard.
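The four-variant check can be sketched in a few lines. This example assumes you have already recorded each variant’s status code and `Location` header with an HTTP client that does not follow redirects; the `observed` data and both helper names are hypothetical.

```python
from typing import Optional


def site_variants(domain: str) -> list[str]:
    """Build the four protocol/subdomain variants of a bare domain."""
    return [
        f"{scheme}://{host}"
        for host in (domain, f"www.{domain}")
        for scheme in ("http", "https")
    ]


def audit_variants(
    responses: dict[str, tuple[int, Optional[str]]], canonical: str
) -> list[str]:
    """List problems. `responses` maps URL -> (status code, Location header)."""
    problems = []
    for url, (status, location) in responses.items():
        if url == canonical:
            if status != 200:
                problems.append(f"{url}: canonical should return 200, got {status}")
        elif status != 301:
            problems.append(f"{url}: expected a 301 redirect, got {status}")
        elif (location or "").rstrip("/") != canonical:
            problems.append(f"{url}: redirects to {location}, not {canonical}")
    return problems


canonical = "https://yourdomain.com"
# Hypothetical observations for the four variants.
observed = {
    "http://yourdomain.com": (301, "https://yourdomain.com/"),
    "https://yourdomain.com": (200, None),
    "http://www.yourdomain.com": (302, "https://yourdomain.com/"),
    "https://www.yourdomain.com": (200, None),
}
for problem in audit_variants(observed, canonical):
    print(problem)
```

Here two variants would be flagged: one uses a temporary 302 instead of a 301, and one serves a live page instead of redirecting.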

Step 5: Crawl your site for technical errors

A site crawl reveals the technical issues that are invisible in a browser but clearly visible to Googlebot. These may include broken links, redirect chains, missing title tags, orphan pages, duplicate content, and other problems that secretly diminish rankings.

Run Screaming Frog on your site. If your site has fewer than 500 pages, the free version covers everything. The data it returns is how search engines actually experience your site, not how you see it in Chrome.

Issues to prioritize during the Screaming Frog crawl:

- Broken internal links (4xx errors): Internal links pointing to pages that no longer exist waste crawl budget and create a dead end for both users and search engines.

- Redirect chains: A chain like A → B → C → D dilutes link equity with every hop and slows down crawling. Flatten anything longer than one redirect to a direct 301.

- Orphan pages: Pages with no internal links pointing to them are rarely prioritized by search engines. Find orphan pages by comparing your sitemap URLs against what Screaming Frog discovered via crawl.

- Duplicate title tags: Two pages with identical title tags signal to Google that your content may be redundant. Fix or differentiate each one clearly.

- Missing canonical tag: Pages that should be consolidated under a canonical URL but lack a canonical tag create indexing ambiguity. Set canonicals explicitly.

- Sitemap issues: Your XML sitemap should only contain indexable pages. Screaming Frog will flag redirect URLs, noindex pages, and broken links that have crept into your sitemap over time.
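Two of the checks above reduce to simple data operations once you export the crawl. The sketch below assumes you have a redirect map (source URL to target URL) and two URL sets, one from your sitemap and one from the crawl. The function names and sample paths are made up for illustration.

```python
def follow_redirects(redirects: dict[str, str], start: str) -> list[str]:
    """Return every URL visited from `start`, stopping at the final target or a loop."""
    path = [start]
    while path[-1] in redirects:
        next_url = redirects[path[-1]]
        path.append(next_url)
        if path.count(next_url) > 1:  # redirect loop; stop and let a human review it
            break
    return path


def flatten(redirects: dict[str, str]) -> dict[str, str]:
    """Point every redirecting URL straight at its final destination."""
    return {src: follow_redirects(redirects, src)[-1] for src in redirects}


def orphan_pages(sitemap_urls: set[str], crawled_urls: set[str]) -> set[str]:
    """URLs listed in the sitemap that the link-following crawl never reached."""
    return sitemap_urls - crawled_urls


redirects = {"/a": "/b", "/b": "/c", "/c": "/d"}
print(follow_redirects(redirects, "/a"))  # the full chain from /a
print(flatten(redirects))                 # each source mapped to the final target

sitemap = {"/", "/pricing", "/old-landing"}
crawled = {"/", "/pricing", "/blog"}
print(orphan_pages(sitemap, crawled))
```

The flattened map is what you would hand to whoever maintains your redirect rules, and the orphan set is your list of pages that need internal links.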

Step 6: Verify indexing status

Crawling and indexing are two different things. A crawler can visit a page and still choose not to index it. Open Google Search Console and navigate to the ‘Pages’ report under ‘Indexing’.

Categorize the pages that need your attention:

- “Crawled - currently not indexed”: Google visited these pages but decided they were not worth indexing. This almost always means thin content, near-duplicate content, or low-value pages.

- “Discovered - currently not indexed”: Google found these pages but has not gotten around to crawling them yet, typically because they are considered low priority. Stronger internal links pointing to these pages often resolve this within a few weeks.

- “Blocked by robots.txt”: Confirm these are intentionally blocked. Accidental blocks after migrations are extremely common.

If your site is small, you can also run the site:yourdomain.com operator in Google and compare the result count to your actual page count. The count Google shows is only an estimate, so use it to spot large discrepancies between the two numbers rather than exact gaps.

Step 7: Test mobile-friendliness

Google uses the mobile version of your site for indexing and ranking. Desktop performance is secondary. A site that looks perfect on a 27-inch monitor but breaks on a phone will underperform in search, regardless of content quality.

Use Bing’s Mobile Friendliness Test Tool for a quick pass on any URL. Google retired the ‘Mobile Usability’ report in Search Console in late 2023, so for site-wide checks, run a Lighthouse audit in Chrome DevTools on your main page templates instead.

The most common mobile problems are text that is too small to read without zooming, touch targets that are too close together to tap accurately, and viewport configuration errors.

If your site is on WordPress, most modern themes are mobile-optimized by default. Custom-built sites should implement responsive design principles that serve the same HTML to all devices while adjusting the layout via CSS.

Step 8: Evaluate site speed and Core Web Vitals

Site speed is both a ranking factor and a user experience issue. A slow site costs you in search and in conversions. Users who click through from an AI citation and land on a page that takes five seconds to load will leave before they read a word.

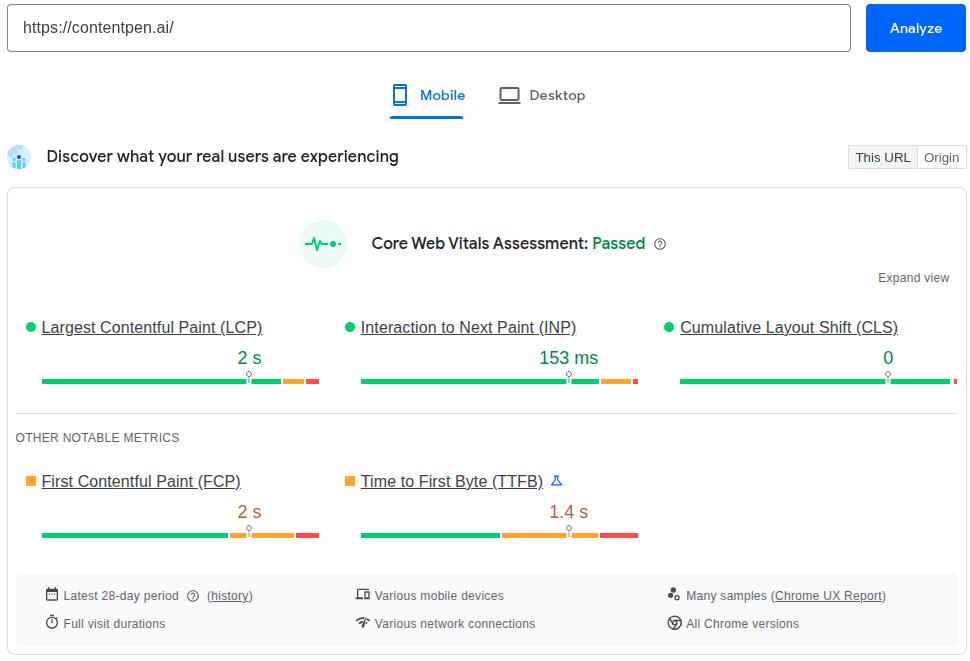

Therefore, you must run your most important pages through Google PageSpeed Insights. The three metrics to target are:

| Metric | Target | What It Measures |
| --- | --- | --- |
| Largest Contentful Paint (LCP) | Under 2.5 seconds | Main content loading speed |
| Interaction to Next Paint (INP) | Under 200ms | Responsiveness to user interaction |
| Cumulative Layout Shift (CLS) | Under 0.1 | Visual stability during page load |

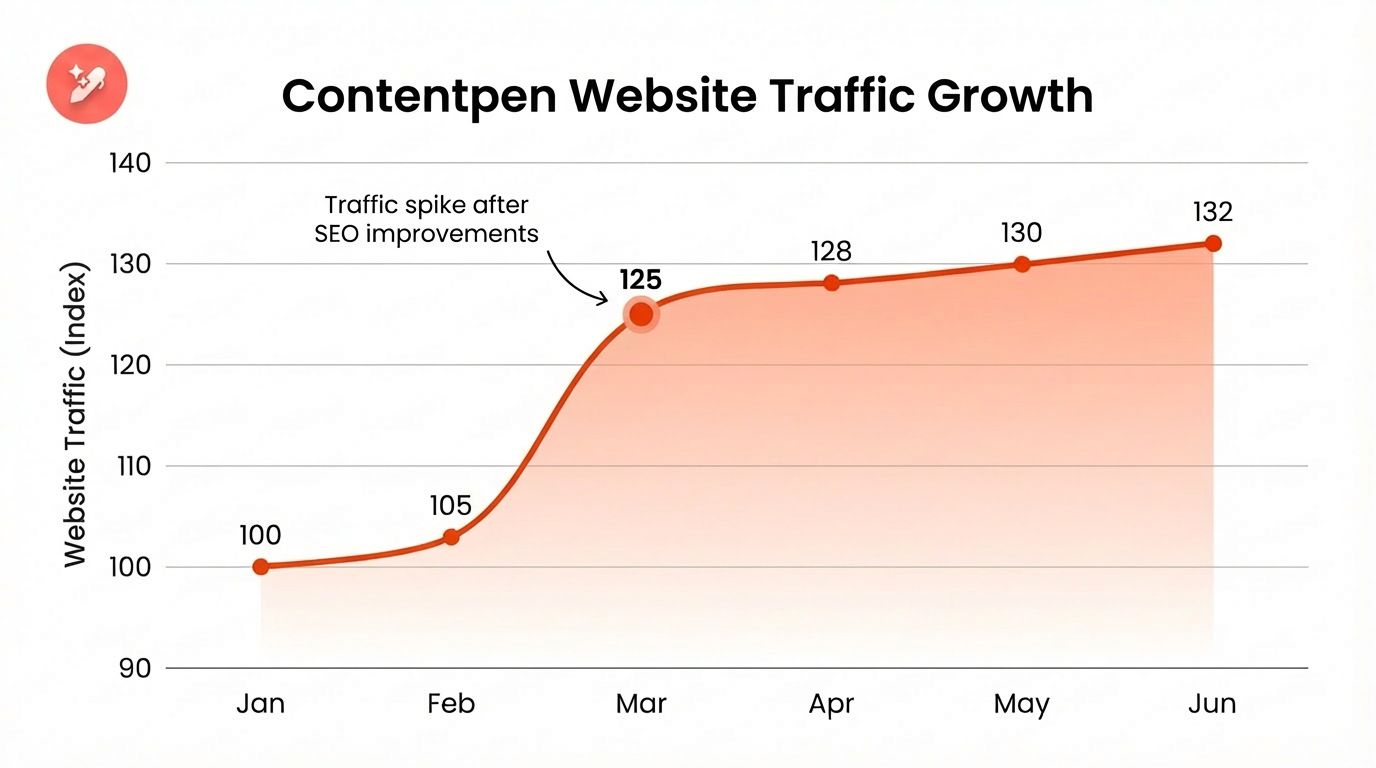

You can take a look at Contentpen’s test in Google PageSpeed Insights to see what the report and its results look like.

Always test on mobile settings. Chasing a perfect 100 score is not necessary. Getting your key pages into the “Good” range across all three metrics is the goal.
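If you are checking many pages, it helps to turn the targets into a small rating function. This is a rough sketch: the “Good” limits match the targets above, while the “Needs Improvement” upper bounds (4.0 s, 500 ms, 0.25) are Google’s published thresholds rather than figures from this guide. The function names are hypothetical.

```python
# (good_max, needs_improvement_max) per metric.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless score
}


def rate(metric: str, value: float) -> str:
    """Classify one measurement as Good, Needs Improvement, or Poor."""
    good_max, needs_improvement_max = THRESHOLDS[metric]
    if value <= good_max:
        return "Good"
    if value <= needs_improvement_max:
        return "Needs Improvement"
    return "Poor"


def page_passes(measurements: dict[str, float]) -> bool:
    """A page passes only when every measured metric is rated Good."""
    return all(rate(metric, value) == "Good" for metric, value in measurements.items())


# Hypothetical mobile measurements for one page.
page = {"LCP": 3.1, "INP": 180, "CLS": 0.05}
for metric, value in page.items():
    print(f"{metric}: {value} -> {rate(metric, value)}")
print("Passes Core Web Vitals:", page_passes(page))
```

In this sample, a 3.1-second LCP is enough to fail the page even though INP and CLS are both in the “Good” range.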

Quick tip:

For LCP, the most common culprits are unoptimized hero images and render-blocking JavaScript. For CLS, always specify explicit width and height on images and video embeds. For INP, minimize third-party script execution.

Step 9: Review on-page SEO elements

On-page SEO is how you communicate to search engines what each page is about. Get the fundamentals wrong, and even excellent content can underperform. Do them right, and you create a compound effect where good content becomes visible content.

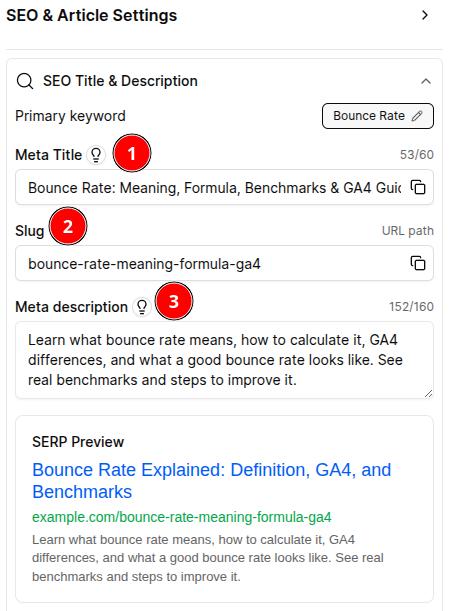

Audit these elements for every important page:

- Title tags: Keep them under 60 characters, unique across the site, with the primary keyword near the front. Duplicate titles are often a symptom of keyword cannibalization.

- Meta descriptions: Keep them between 130 and 150 characters. These are not a direct ranking factor, but they directly influence click-through rate. Write them like a one or two-line ad for the page, summarizing everything that you’ll cover on it in clear language.

- Header structure: Use one H1 per page that clearly defines what the page covers, with a logical H2/H3 hierarchy throughout. Proper heading structure is one of the most reliable paths to featured snippet eligibility.

- URL slugs: Keep them short, lowercase, hyphen-separated, and descriptive. /what-is-geo is good. /blog/post?id=4827&category=seo is not.

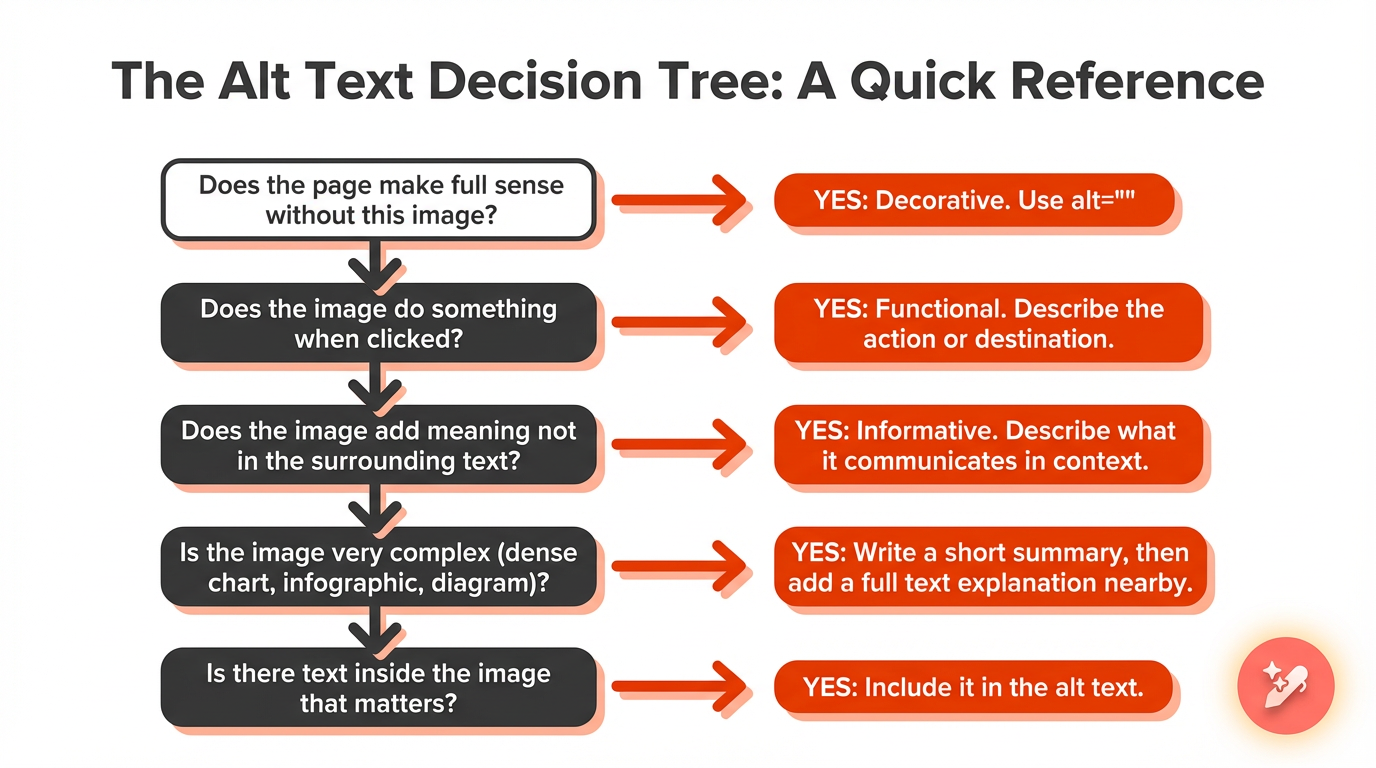

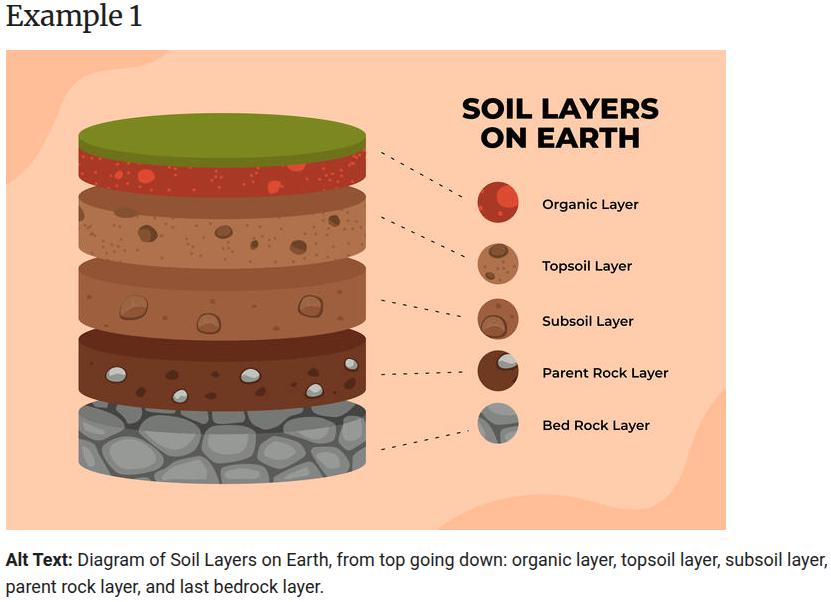

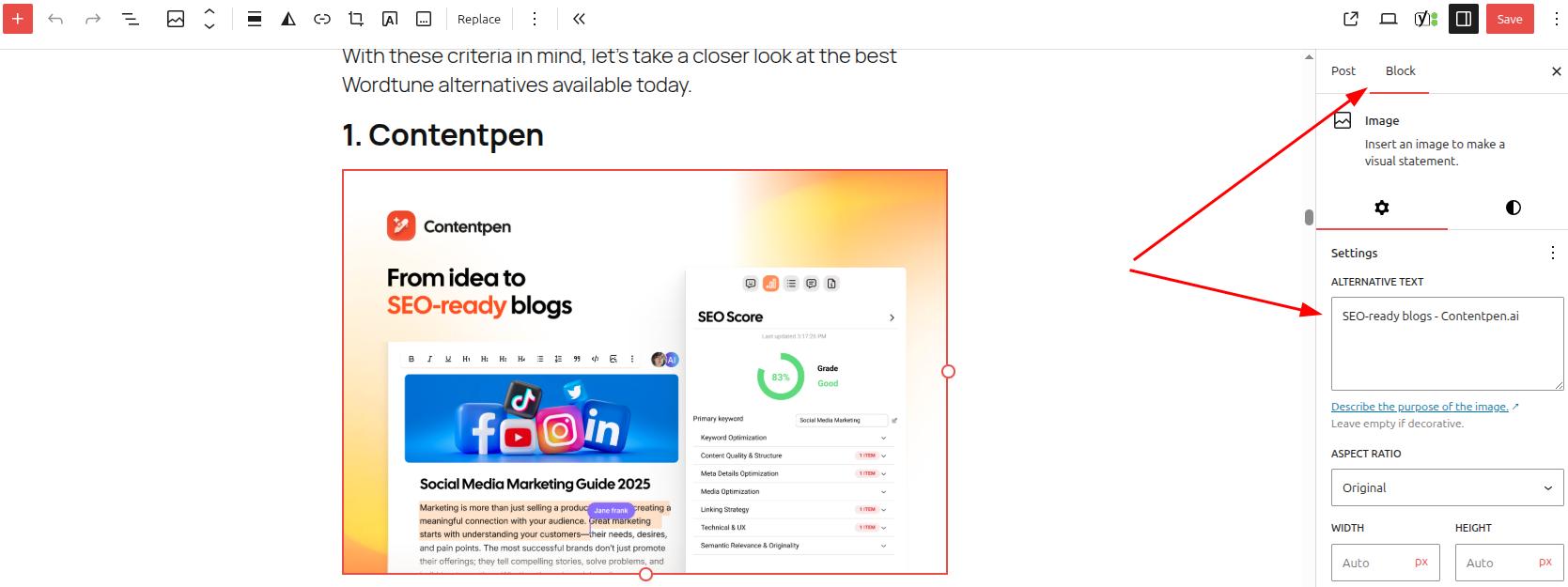

- Image alt text: Every image needs descriptive alt text. This helps visually impaired users and search engines understand the image, and contributes to image search visibility.

- Keyword placement: Your primary keyword should appear naturally in the first 100 words. Use related keyword variations for better semantic SEO and to signal topical depth. However, always avoid stuffing keywords.
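For a quick spot check of a single page, the title, meta description, and H1 rules from this list can be scripted with Python’s built-in `html.parser`. This is a minimal sketch with hypothetical class and function names. It does not cover alt text, slugs, or keyword placement.

```python
from html.parser import HTMLParser


class OnPageParser(HTMLParser):
    """Collects the title, meta description, and H1 count from an HTML page."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.meta_description = ""
        self.h1_count = 0
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "h1":
            self.h1_count += 1
        elif tag == "meta" and (attr.get("name") or "").lower() == "description":
            self.meta_description = attr.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def audit_page(page_html: str) -> list[str]:
    """Return a list of on-page issues based on the targets in this step."""
    parser = OnPageParser()
    parser.feed(page_html)
    issues = []
    title_len = len(parser.title.strip())
    if title_len == 0:
        issues.append("missing title tag")
    elif title_len >= 60:
        issues.append(f"title is {title_len} characters (target: under 60)")
    desc_len = len(parser.meta_description.strip())
    if not 130 <= desc_len <= 150:
        issues.append(f"meta description is {desc_len} characters (target: 130-150)")
    if parser.h1_count != 1:
        issues.append(f"found {parser.h1_count} H1 tags (target: exactly 1)")
    return issues


sample_html = """
<html><head>
<title>How to Do an SEO Audit</title>
<meta name="description" content="Too short.">
</head><body><h1>SEO Audit</h1><h1>Second H1</h1></body></html>
"""
for issue in audit_page(sample_html):
    print(issue)
```

The sample page has a fine title but gets flagged for a 10-character meta description and a second H1.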

For large sites, auditing every page manually is impractical. Tools like Semrush’s On Page SEO Checker can export all of these elements in bulk so you can sort and identify issues across hundreds of URLs at once.

Step 10: Conduct a content quality audit

This is the step that drives the most growth and the one that most people rush through. Give it the time it deserves.

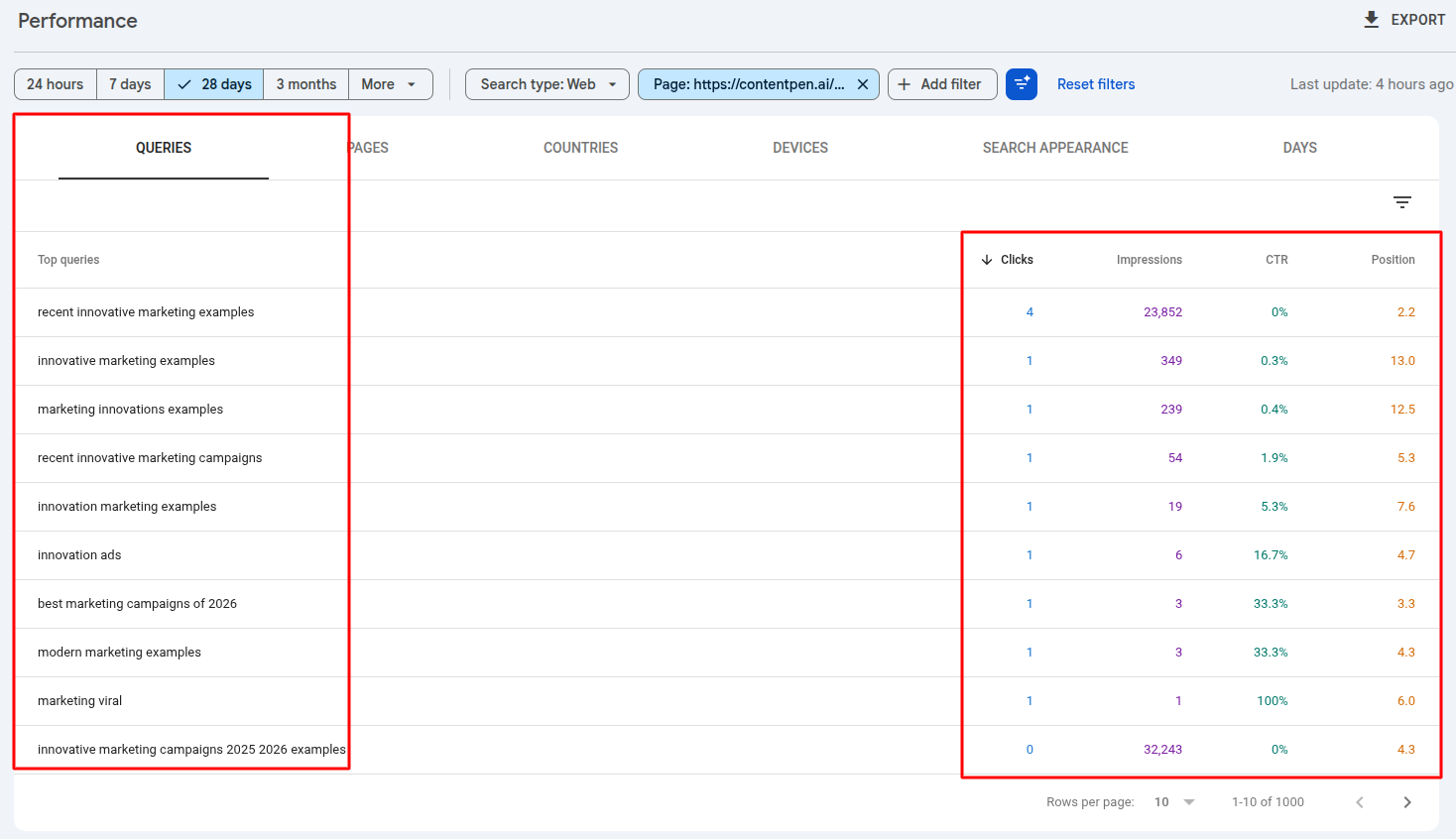

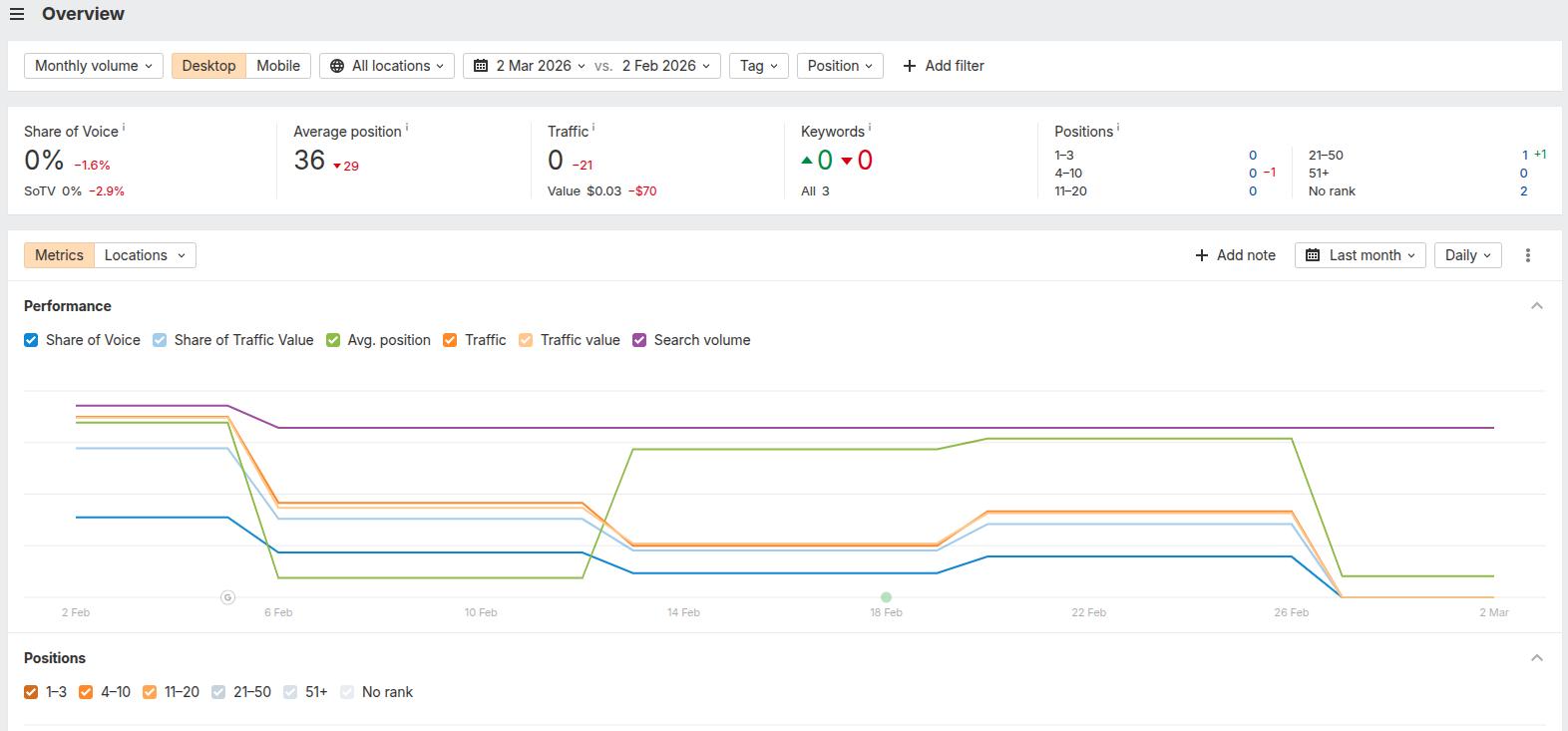

Open Google Search Console and navigate to the ‘Search Results report’. Set the date range to the last 12 months. Sort by impressions. Look for two categories:

High impressions, low CTR

Your content is appearing in search, but is not getting clicked. This usually means your title tag or meta description is weak, or your result is losing to a competitor’s featured snippet. Fix the on-page elements first.

Declining Year-over-Year (YOY) traffic

Pages where both impressions and clicks have dropped compared to the same period last year are your decaying pages, and they are almost always the fastest opportunities to recover.
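Once you export the report, both categories can be pulled out with a simple filter. The sketch below assumes you have combined this year’s and last year’s numbers per page. The `PageStats` fields, the 1,000-impression floor, and the 1% CTR cutoff are hypothetical values to tune for your own site.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    clicks: int
    impressions: int
    clicks_last_year: int
    impressions_last_year: int


def low_ctr_pages(pages, min_impressions=1000, max_ctr=0.01):
    """High-impression pages whose click-through rate falls below max_ctr."""
    return [
        p.url
        for p in pages
        if p.impressions > 0
        and p.impressions >= min_impressions
        and p.clicks / p.impressions < max_ctr
    ]


def decaying_pages(pages):
    """Pages where both clicks and impressions dropped year over year."""
    return [
        p.url
        for p in pages
        if p.clicks < p.clicks_last_year and p.impressions < p.impressions_last_year
    ]


# Hypothetical export rows.
pages = [
    PageStats("/seo-audit", 40, 8000, 35, 6000),
    PageStats("/keyword-research", 300, 5000, 520, 9000),
    PageStats("/about", 5, 120, 4, 100),
]
print("High impressions, low CTR:", low_ctr_pages(pages))
print("Declining year over year:", decaying_pages(pages))
```

In the sample data, /seo-audit lands in the first bucket with a 0.5% CTR, and /keyword-research lands in the second because both of its numbers fell.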

For each priority page, ask:

- Does this fully cover the topic, or are there subtopics that competitors address that we do not?

- Are the data, statistics, and advice current, or have they aged out?

- Does it match how people are searching today, or has search intent shifted since we wrote it?

Then sort every page into one of four action categories:

| Action | When to take it |
| --- | --- |
| Update | Good foundation for the content; needs fresh data, new sections, or added depth |
| Rewrite | The premise is sound, but the execution is poor, or the intent has shifted over the years |
| Consolidate | Multiple weaker pages are competing for the same keyword |
| Remove + Redirect | Low value with no realistic path to improvement |

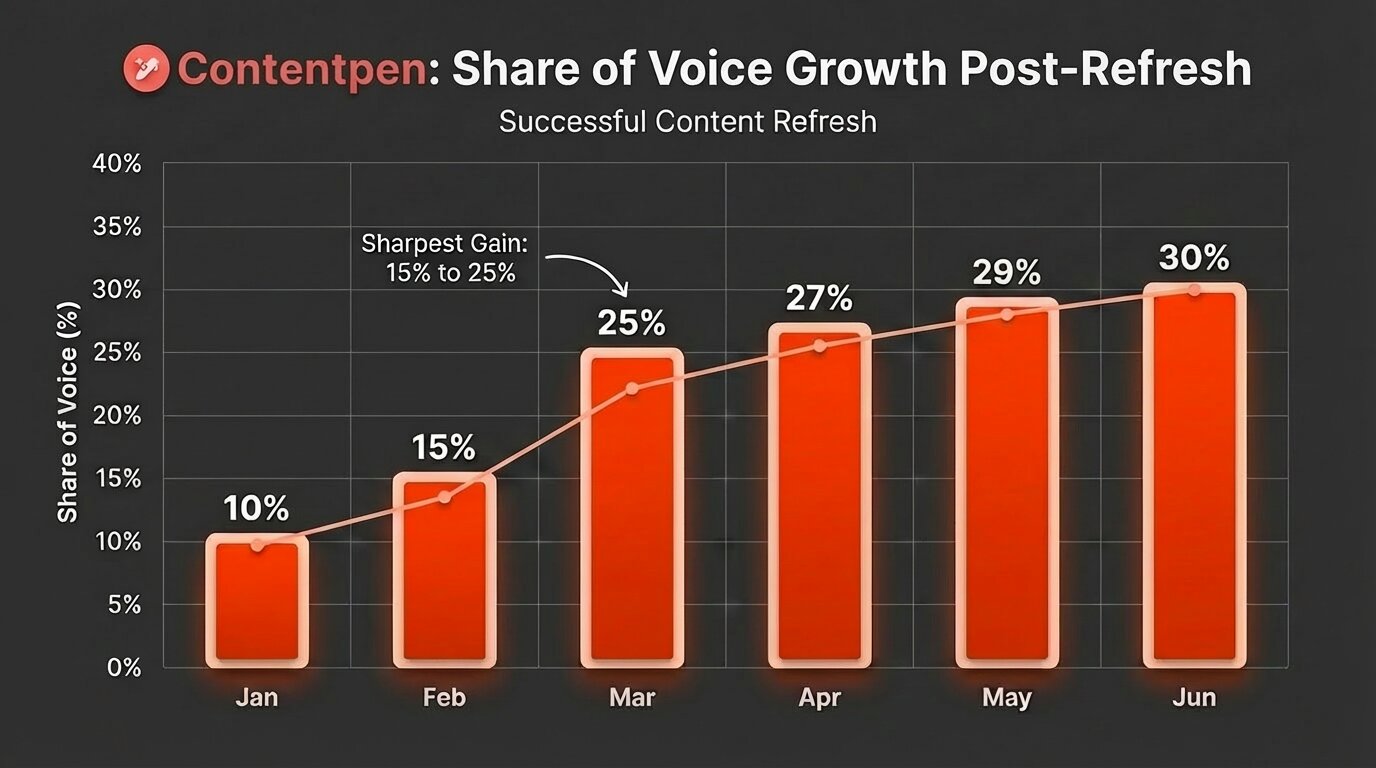

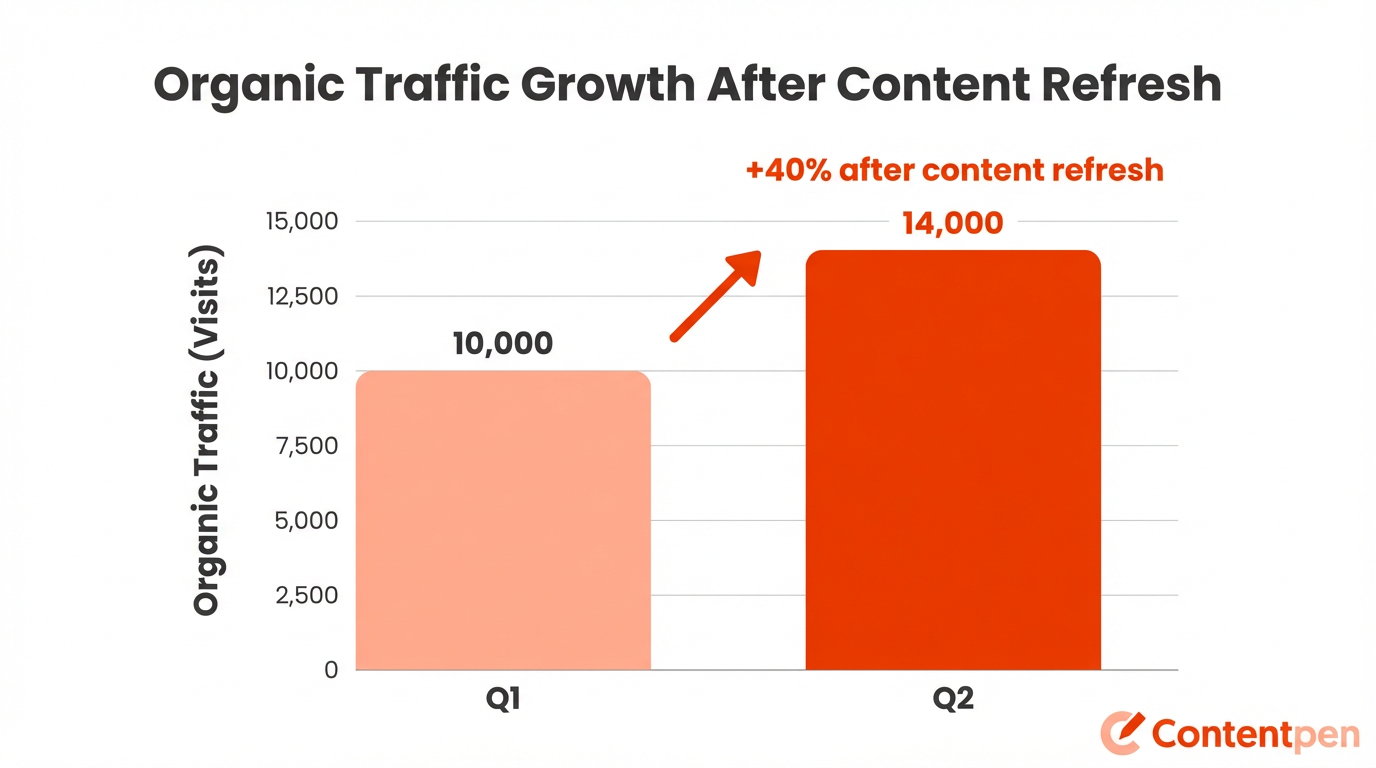

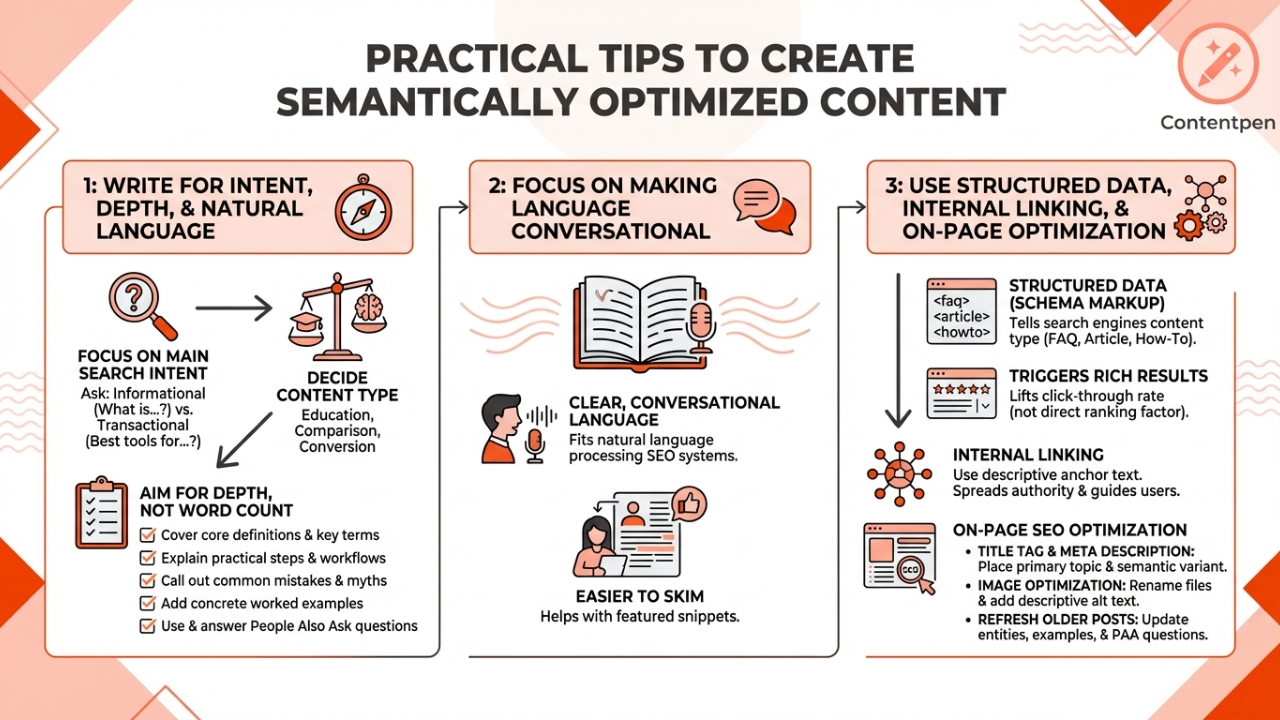

Why refreshing existing content beats publishing new content

Refreshing a page that already has some authority almost always outperforms publishing something brand new.

A page sitting in positions 8 to 15 for a competitive keyword already has indexing history and maybe some backlinks. It often needs only targeted updates, such as replacing stale statistics and adding proper FAQ schema.

You should also restructure content for featured snippets and AI citations by giving direct 40-60 word answers for questions in your blog and articles.

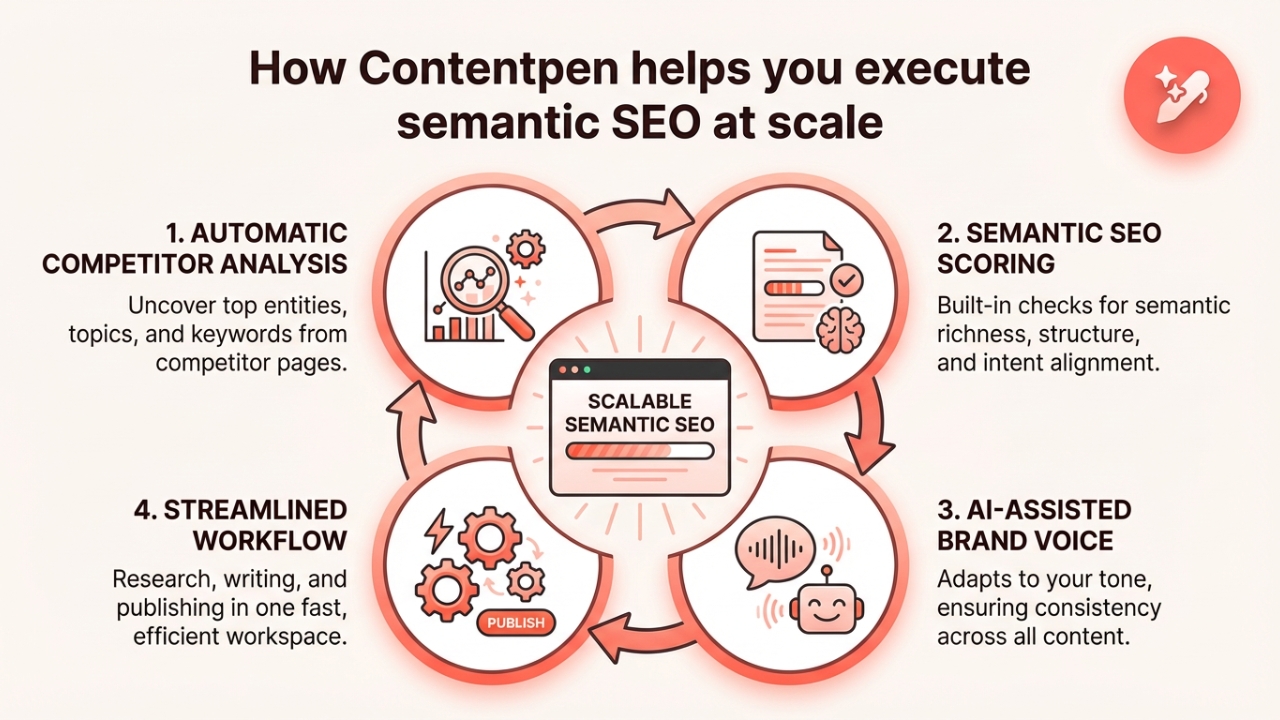

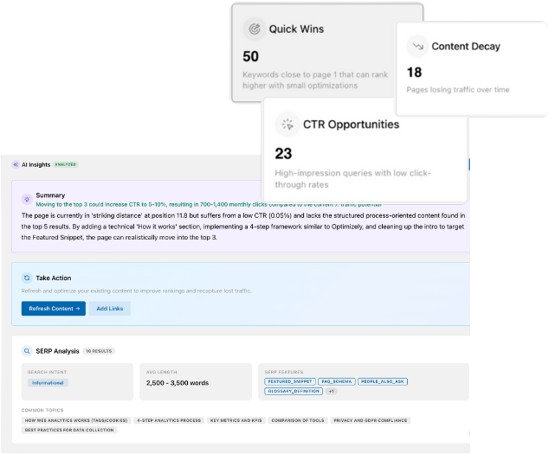

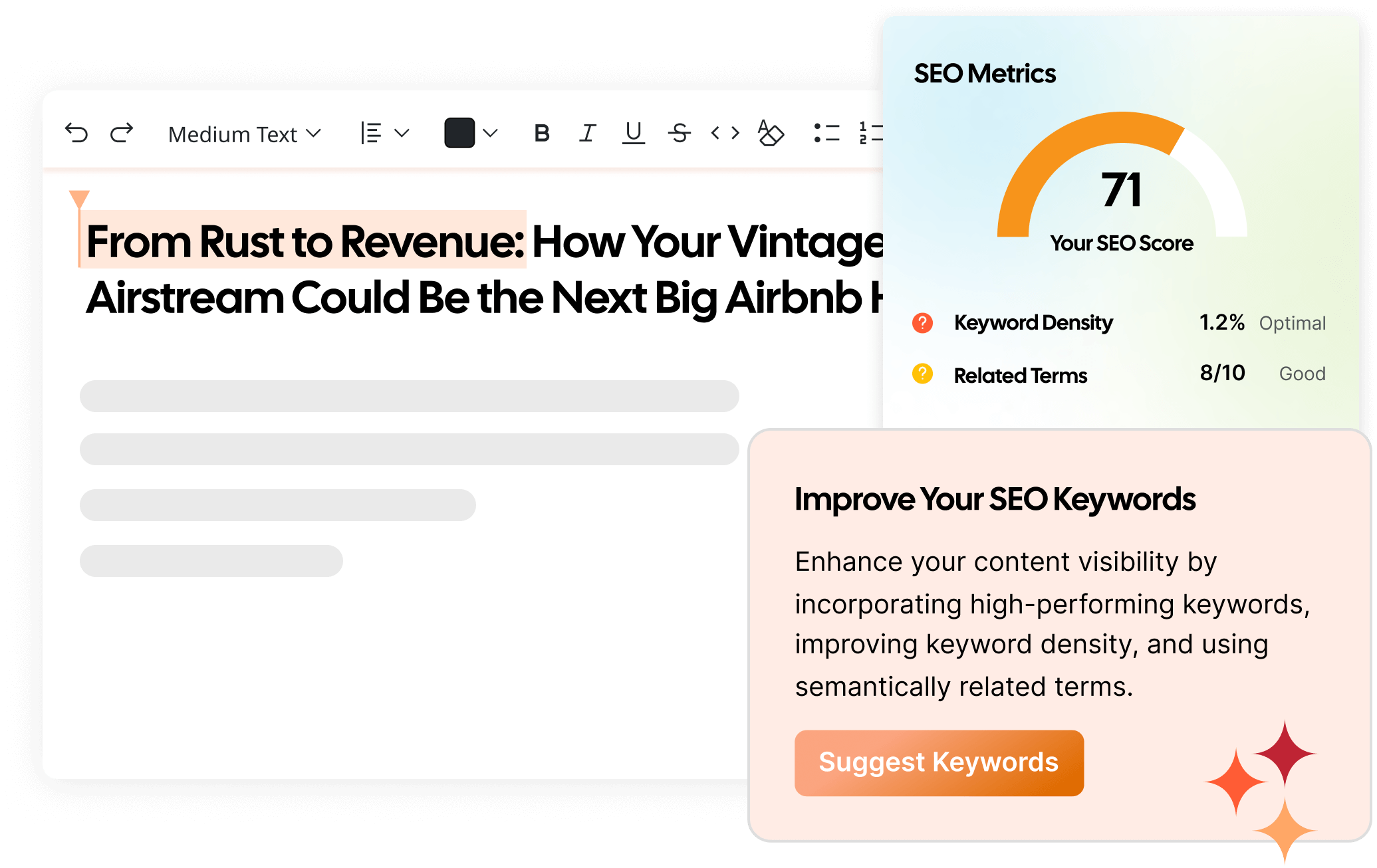

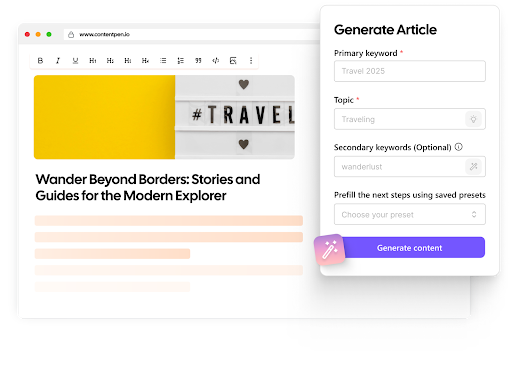

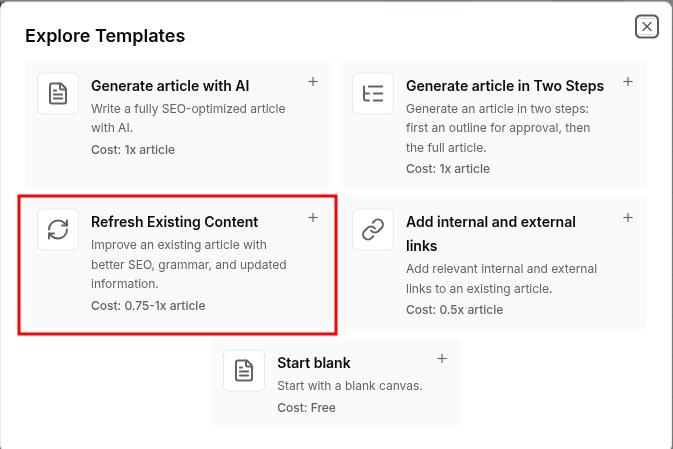

This is exactly where you need an SEO platform and an AI writer like Contentpen. Our tool offers a ‘Refresh Existing Content’ mode that is built to accelerate the content updating process so that you don’t start from scratch.

Rather than spending hours manually combing through competitor SERPs to map the gaps, it surfaces them for you automatically. The tool also offers a 7-day free trial so that you can produce your SEO- and GEO-optimized articles with ease and see the results for yourself.


Step 11: Check your E-E-A-T signals

Experience, Expertise, Authoritativeness, and Trustworthiness are the lenses Google uses to evaluate content quality, especially for sensitive industries. E-E-A-T is not a technical metric you can check with a crawler. It is a judgment call based on signals spread across your site.

You should mainly audit for:

- Author credentials: Do your key articles have a named author with a bio that establishes their relevant expertise? Anonymous content or generic “Staff Writer” bylines are a weakness here.

- Updated dates: Content without a visible last-updated date looks stale. A visible update date that reflects when you actually reviewed the content demonstrates content freshness.

- Original data and examples: First-hand evidence is the ‘Experience’ part of E-E-A-T. Screenshots, original research, case studies, and real-life examples all signal to Google that the content was written by a person who has actually done the work.

- External sources: Citing authoritative external sources adds credibility. Link out to ‘.edu’ platforms or other high-authority sites. This genuinely helps the reader and shows search engines that your claims rest on reliable references.

- About and contact information: A clear ‘About’ page, identifiable leadership, and real contact information establish the ‘Trust’ dimension.

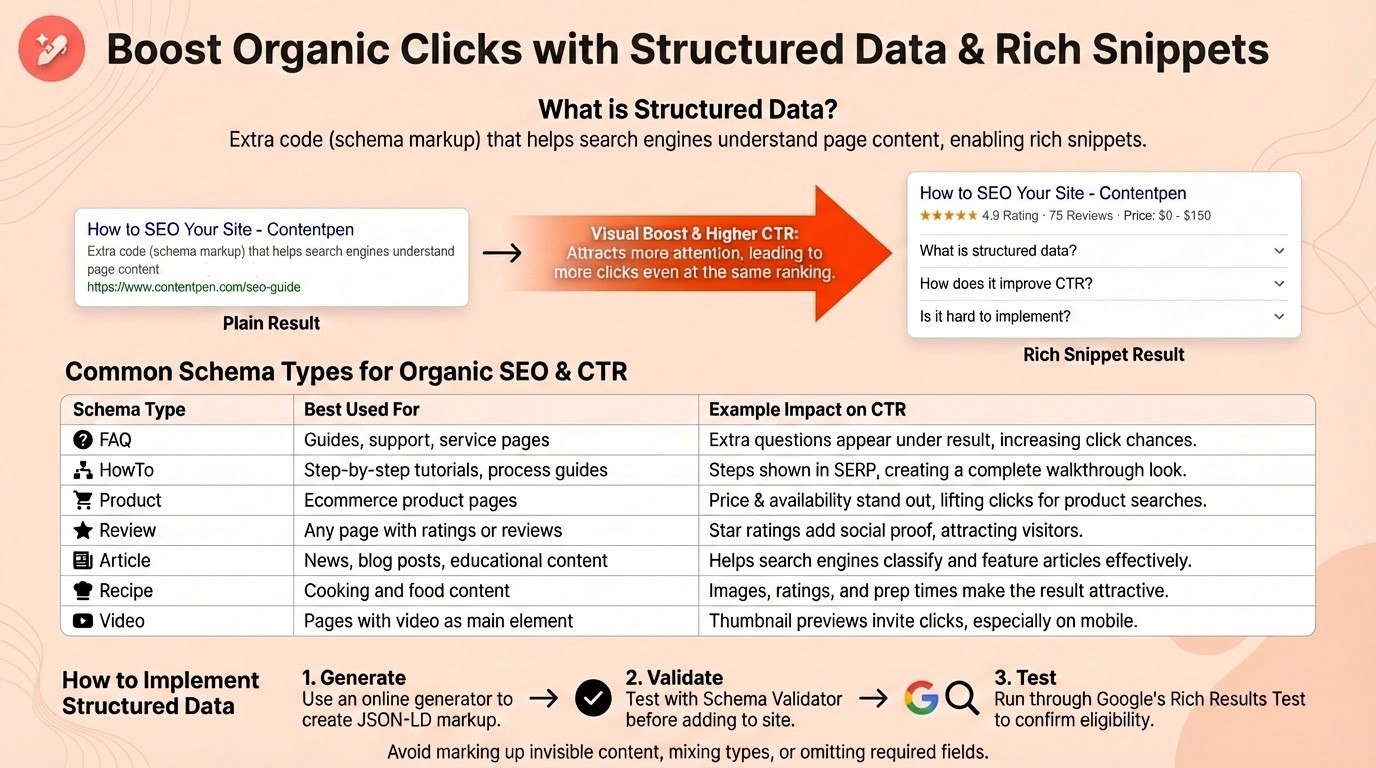

Step 12: Check structured data and schema markup

Structured data is how you give search engines and AI systems explicit, machine-readable context about your content. It does not directly boost rankings, but it dramatically improves how your content is understood and represented online.

Use Google’s Rich Results Test to validate your current implementation. The schema types that matter most include:

- FAQPage: Marks up question-and-answer sections. Google now shows FAQ rich results only for a limited set of authoritative sites, but the markup still gives search engines and AI systems a clean, machine-readable version of your Q&A content. Add FAQ sections to your key pages and mark them up correctly.

- HowTo: For step-by-step guides like this one. Google retired HowTo rich results in 2023, so treat this markup as extra context for machines rather than a way to get your steps displayed in search results.

- Article: Signals content type, publication date, author, and organization. Helps AI systems accurately represent your content.

- BreadcrumbList: Helps search engines understand your site hierarchy and often results in breadcrumb display in search results.

- LocalBusiness: Essential for local SEO. Add with complete, accurate contact information.

Fix any validation errors the Rich Results Test surfaces. An invalid schema implementation is worse than no schema. It generates structured data errors in Search Console and signals a poorly maintained site.
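If your CMS does not generate schema for you, FAQPage markup is simple enough to build yourself. The sketch below produces JSON-LD using the schema.org FAQPage structure (`mainEntity`, `Question`, `acceptedAnswer`). The `faq_jsonld` helper and the sample question are illustrative only, and the output should still go through the Rich Results Test.

```python
import json


def faq_jsonld(qa_pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)


markup = faq_jsonld([
    (
        "What is an SEO audit?",
        "A structured evaluation of your website's ability to appear in search results.",
    ),
])
# Wrap the JSON-LD in the script tag that goes into the page's HTML.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Generating the markup from the same question-and-answer pairs you render on the page keeps the schema and the visible content in sync.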

Step 13: Audit your AI visibility and brand representation

AI answer engines are now a meaningful traffic source. ChatGPT, Perplexity, and Google’s AI Mode are growing rapidly. If your brand or content is not being surfaced or cited accurately in these systems, you are missing an increasingly important traffic channel.

Benchmark your AI visibility

Manually test AI platforms with queries relevant to your business. For instance:

- “What is [your company]?”

- “What are the best [your product category] tools?”

- “How do I [solve the problem your product addresses]?”

Document whether your brand appears, how prominently, and whether the description provided by the AI platform is accurate.

If AI chatbots are describing your product incorrectly, the fix usually starts on your own site. Update your ‘About Us’ page, product descriptions, and homepage copy with accurate, current information that AI systems can easily retrieve.

Check topic association

If you sell SEO software but AI only mentions you for “content marketing tools” and never for “keyword research” or “backlink analysis,” it means you have topic association gaps. Close them by creating or updating content that clearly positions you in those missing topic areas.

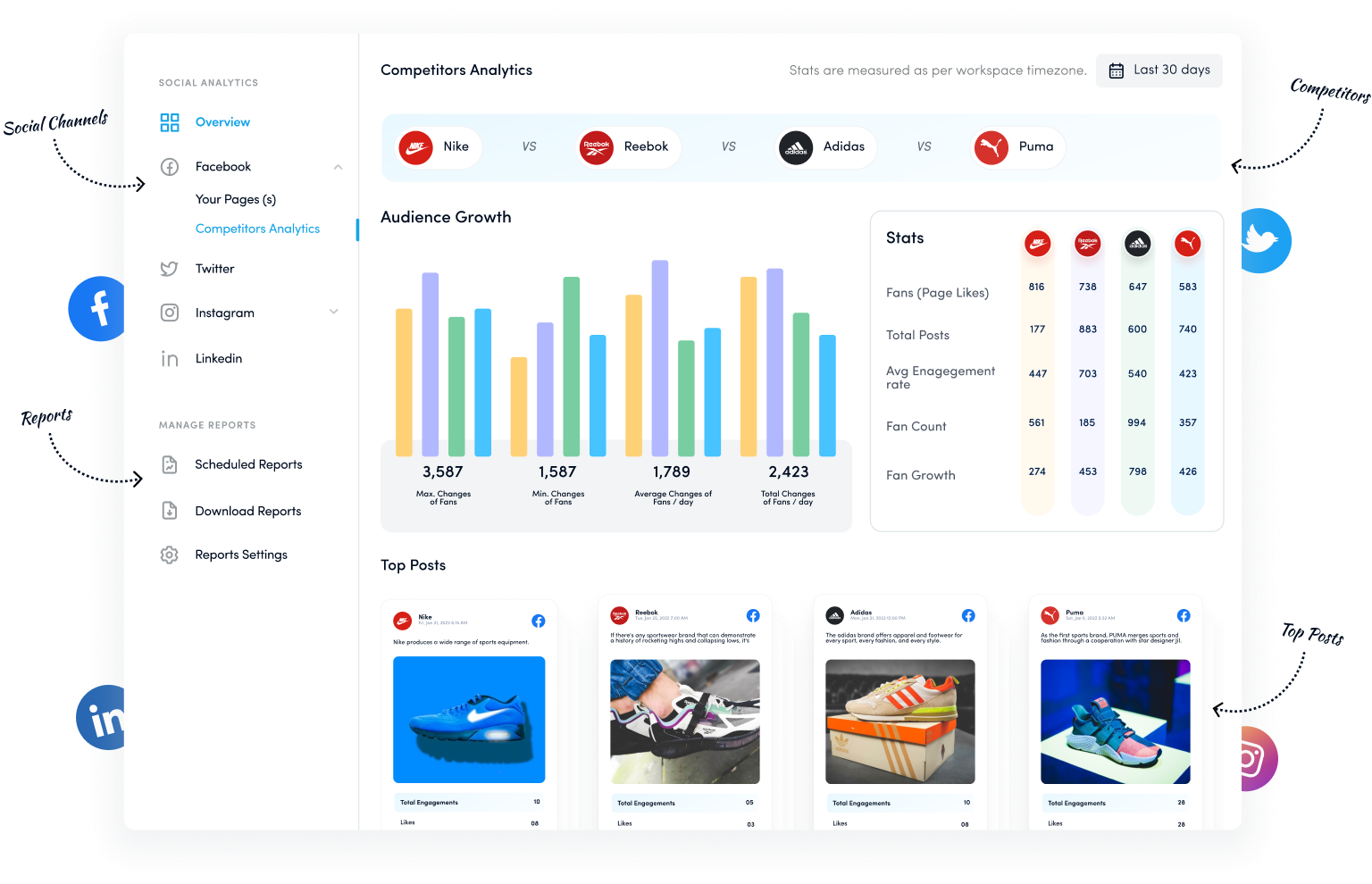

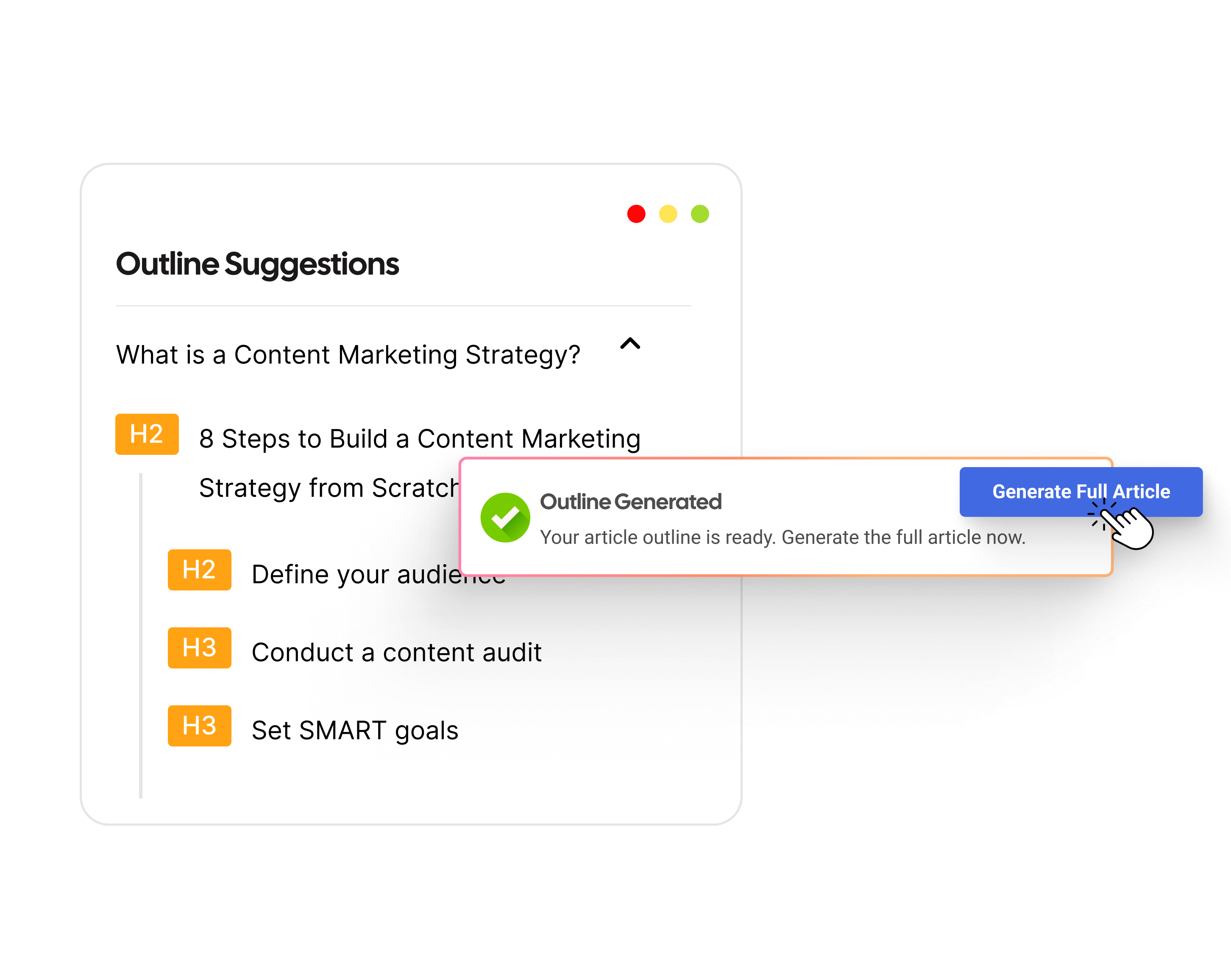

You can use Contentpen for this task, which helps in generating and publishing high-quality blogs from start to finish with the least hassle.


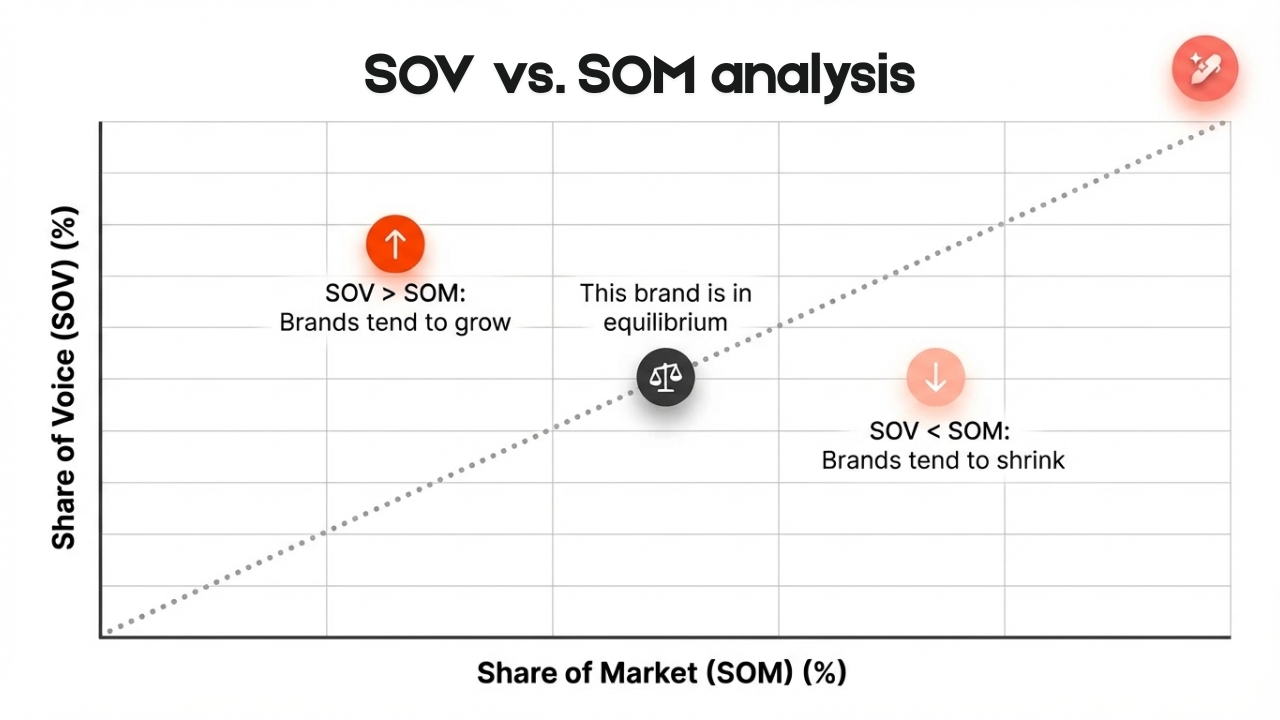

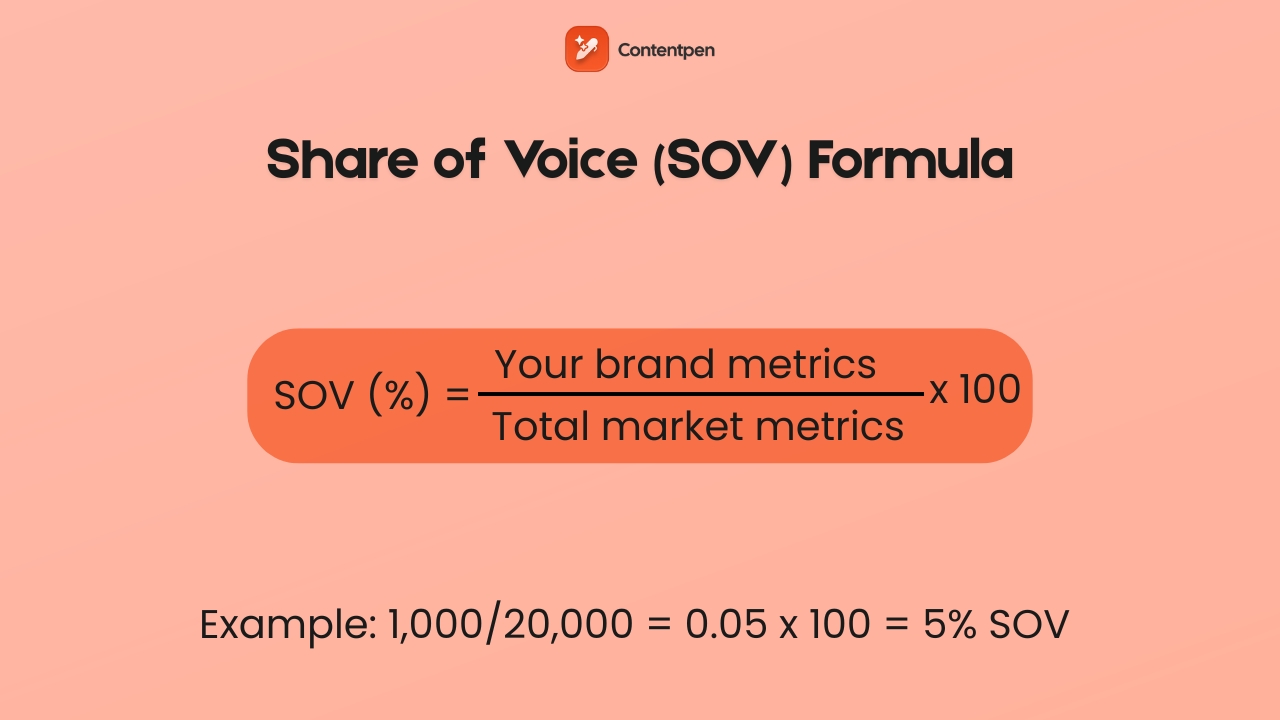

You can also utilize the tool’s clustering feature to create topical authority and increase share of voice in a niche.

Structure content for AI citation

AI systems retrieve content in chunks, and they favor content that leads with clear, direct answers. Structure your content into clearly labeled sections with digestible, quotable segments that ChatGPT, Perplexity, Gemini, and other AI discovery platforms can lift directly.

Step 14: Conduct a backlink audit and competitor analysis

Your backlink profile is your site’s reputation in Google’s eyes. Strong, relevant links from authoritative sources help you rank. Spammy, manipulative, or irrelevant links can suppress rankings and, in serious cases, trigger manual penalties.

Analyze your link profile

Pull your backlink data from Ahrefs or Semrush. Look for:

- Anchor text distribution: A healthy profile has most links using branded anchors, naked URLs, or generic phrases. Many exact-match keyword anchors across low-quality sites look manipulative.

- Link quality: A link from a respected industry publication carries more weight than a hundred links from content farms. Review the link quality scores that your SEO tool reports for each referring domain.
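To get a feel for your anchor text mix, you can bucket an exported anchor list and look at the shares. This is a rough sketch: the brand name “Acme”, the generic phrase list, and the target keyword set are all placeholder assumptions, and real tools classify anchors with far more nuance.

```python
from collections import Counter
import re

# Hypothetical list of generic anchor phrases; extend it for your own data.
GENERIC = {"click here", "read more", "here", "this article", "website", "learn more"}


def classify_anchor(anchor: str, brand: str, target_keywords: set[str]) -> str:
    """Bucket one anchor as naked_url, branded, generic, exact_match, or other."""
    text = anchor.strip().lower()
    if re.match(r"^(https?://|www\.)", text):
        return "naked_url"
    if brand.lower() in text:
        return "branded"
    if text in GENERIC:
        return "generic"
    if text in target_keywords:
        return "exact_match"
    return "other"


def anchor_distribution(anchors, brand, target_keywords):
    """Return each bucket's share of all anchors, from 0.0 to 1.0."""
    counts = Counter(classify_anchor(a, brand, target_keywords) for a in anchors)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}


anchors = ["Acme", "Acme SEO", "https://acme.example", "click here", "best seo audit tool"]
shares = anchor_distribution(anchors, brand="Acme", target_keywords={"best seo audit tool"})
for label, share in sorted(shares.items()):
    print(f"{label}: {share:.0%}")
```

If the exact-match share dominates and those links come from low-quality sites, that is the pattern worth investigating.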

Identify and disavow toxic links

Use Google’s Disavow Tool conservatively. Disavowing legitimate links by mistake can hurt you. Disavow at the domain level for obvious spam sources, which may include link farms, PBNs, and unrelated foreign-language sites.

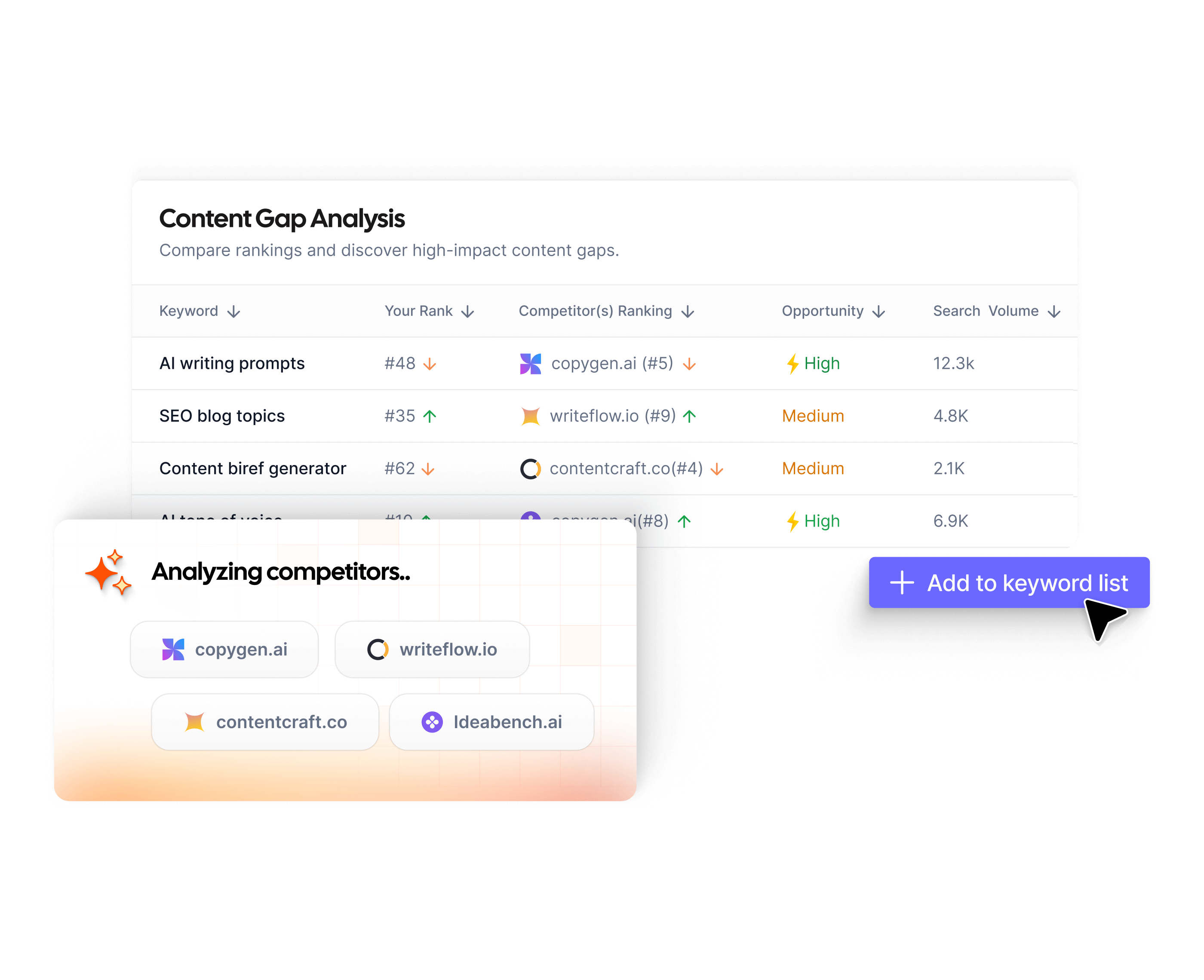

Run a competitor backlink gap analysis

Find sites that link to your competitors but not to you. These are your warmest outreach targets as they have already demonstrated a willingness to link to content on your topic.
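At its core, a link gap is a set comparison. The sketch below assumes you have exported referring domains for your site and for each competitor; all domain names are placeholders. It ranks domains by how many competitors they link to, on the theory that a site linking to several of them is a warmer target.

```python
from collections import Counter


def link_gap(
    your_domains: set[str],
    competitor_domains: dict[str, set[str]],
    min_competitors: int = 2,
) -> list[tuple[str, int]]:
    """Domains linking to at least `min_competitors` competitors but not to you."""
    counts = Counter(
        domain
        for domains in competitor_domains.values()
        for domain in domains
    )
    gap = [
        (domain, n)
        for domain, n in counts.items()
        if n >= min_competitors and domain not in your_domains
    ]
    # Most widely shared domains first, then alphabetical for stable output.
    return sorted(gap, key=lambda item: (-item[1], item[0]))


yours = {"news.example"}
competitors = {
    "rival-a.example": {"news.example", "blog.example", "review.example"},
    "rival-b.example": {"blog.example", "review.example", "forum.example"},
    "rival-c.example": {"blog.example"},
}
for domain, n in link_gap(yours, competitors):
    print(f"{domain} links to {n} competitors")
```

In this sample, blog.example tops the outreach list because it links to all three competitors and not to you.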

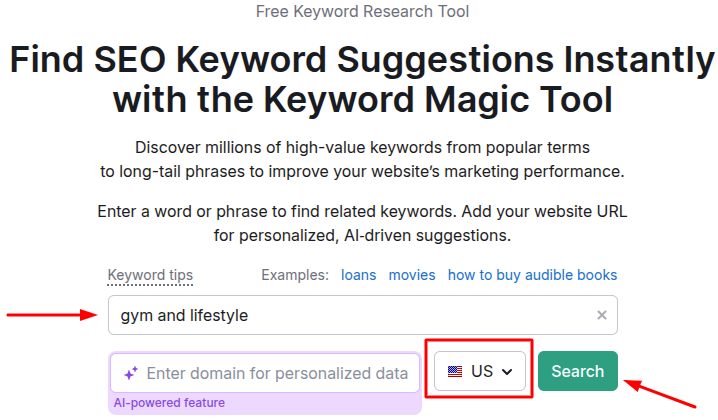

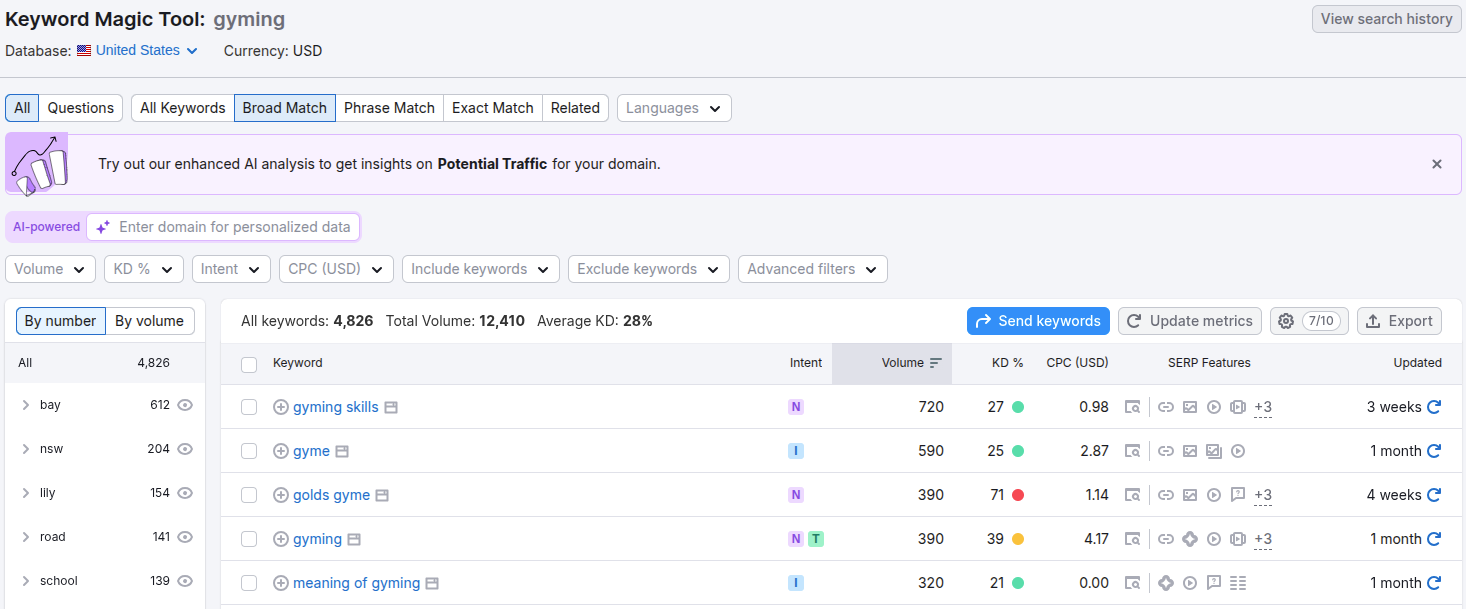

Run a keyword gap analysis

Find queries your competitors rank for that you do not. This feeds your content roadmap directly, for both refresh priorities and new content planning.

Monitor unlinked brand mentions

When you find your brand mentioned online without a link, reach out. In most cases, a polite request to convert a plain-text mention into a hyperlink has a reasonable success rate and costs almost nothing.

To find brand mentions, search Google for “Brand Name” -site:yoursite.com, which returns pages that mention your brand while excluding your own site.

Step 15: Local SEO audit (if applicable)

If you serve customers in a specific geographic area, a local SEO audit is essential.

Google Business Profile

This is your most important local SEO asset. Audit for completeness (every field filled in), accuracy (business name, address, and phone number exactly matching your website), correct primary and secondary categories, and recent activity (posts, photos, review responses).
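The name, address, and phone comparison can be sketched as a small consistency check. This example normalizes formatting first, so it only flags substantive differences; since the goal above is an exact match, you may prefer to compare the raw strings. The business details are made up.

```python
import re


def normalize_phone(phone: str) -> str:
    """Keep digits only so formatting differences do not count as mismatches."""
    return re.sub(r"\D", "", phone)


def normalize_text(value: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^\w\s]", "", value.lower())
    return re.sub(r"\s+", " ", cleaned).strip()


def nap_mismatches(profile: dict[str, str], website: dict[str, str]) -> list[str]:
    """Return the NAP fields whose normalized values differ."""
    mismatches = []
    for field in ("name", "address", "phone"):
        normalize = normalize_phone if field == "phone" else normalize_text
        if normalize(profile[field]) != normalize(website[field]):
            mismatches.append(field)
    return mismatches


# Hypothetical listing data.
profile = {
    "name": "Acme Plumbing",
    "address": "12 Main St., Springfield",
    "phone": "(555) 010-0199",
}
website = {
    "name": "Acme Plumbing",
    "address": "12 Main Street, Springfield",
    "phone": "555-010-0199",
}
print("Fields to fix:", nap_mismatches(profile, website))
```

Here the phone numbers match once formatting is stripped, but “St.” versus “Street” is flagged as an address mismatch to clean up.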

Reviews and reputation

Positive reviews improve both ranking signals and conversion rates from local results. Monitor and respond to reviews, both positive and negative. Unaddressed negative reviews signal to Google and to potential customers that the business is not actively managed.

LocalBusiness schema

Add the LocalBusiness schema to your website with complete, accurate contact information. It helps Google verify your business details and improves your chances of appearing in the local map pack.

Step 16: Measure, prioritize, and build your action plan

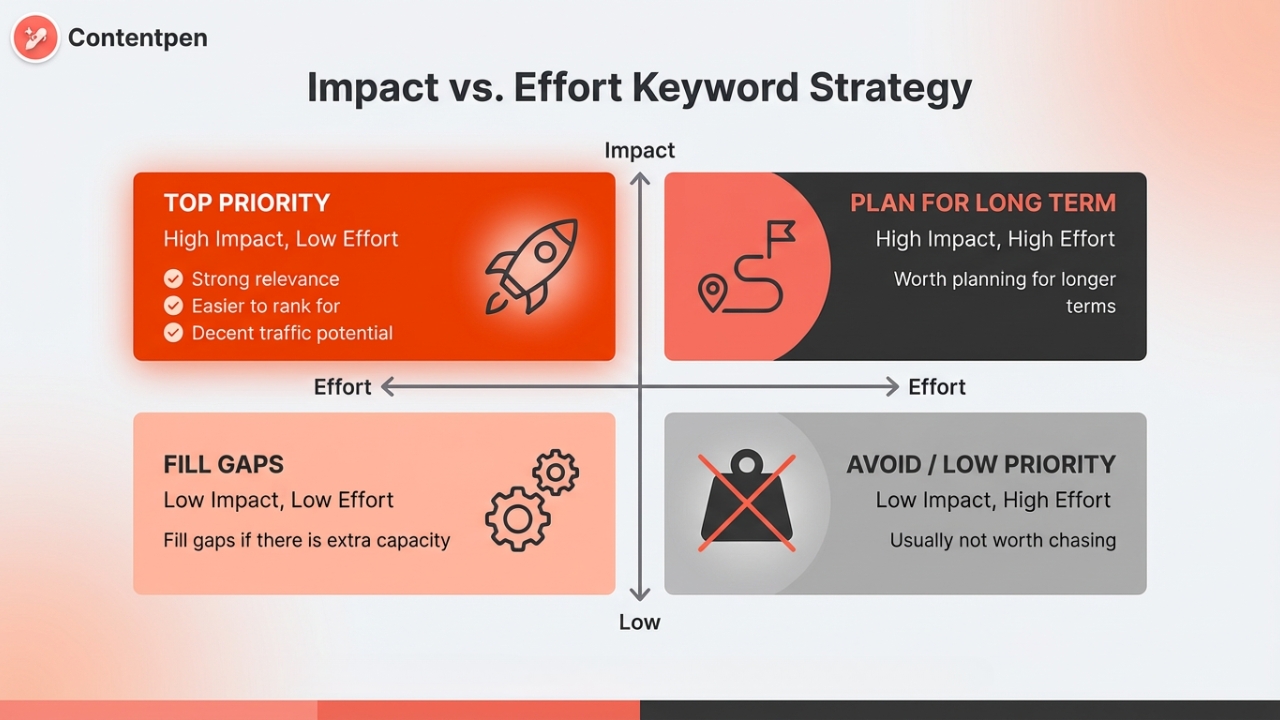

An SEO audit is only worth doing if it produces action. An action plan is only useful if it is prioritized. You cannot fix everything at once, and not everything is worth fixing in the same week.

That is why you need to follow this three-tier framework to organize every issue you find and fix it without overload:

Tier 1: Fix this week (high impact, relatively low effort)

- Indexing errors that are blocking important pages

- Pages accidentally blocked via robots.txt

- Missing or broken canonical tags on high-traffic pages

- Core Web Vitals failures on your top 10 pages

- Critical schema errors

Tier 2: Fix this sprint (high impact, higher effort)

- Content refresh for high-impressions, low-CTR pages

- Content gap fills on core topic pages

- Redirect chain cleanup across the site

- E-E-A-T improvements on key pages (author bios, updated dates)

- Disavow file for confirmed toxic links

Tier 3: Ongoing optimization (incremental, compounding)

- Internal link architecture improvements

- Local directory citation cleanup

- FAQ schema additions to pages

- New backlink acquisition outreach

- AI brand representation monitoring

For each item, log the URL or issue, the fix required, the expected impact, and who owns it. A 10-item prioritized list with owners and due dates will produce more ranking gains than an 80-item spreadsheet that nobody acts on.
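One lightweight way to keep that log honest is to give every item the same fields and sort it mechanically. The sketch below is one possible structure, not a prescribed format: the 1-to-5 impact score, the sample issues, owners, and dates are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class AuditItem:
    issue: str
    fix: str
    tier: int             # 1 = this week, 2 = this sprint, 3 = ongoing
    expected_impact: int  # hypothetical 1-5 score, higher is better
    owner: str
    due: date


def prioritize(items: list[AuditItem], limit: int = 10) -> list[AuditItem]:
    """Order by tier, then impact (highest first), then due date; keep the top `limit`."""
    ordered = sorted(items, key=lambda i: (i.tier, -i.expected_impact, i.due))
    return ordered[:limit]


items = [
    AuditItem("Redirect chains on /blog", "Point old URLs at final targets",
              2, 3, "Dev team", date(2026, 2, 20)),
    AuditItem("/pricing blocked in robots.txt", "Remove the Disallow rule",
              1, 5, "SEO lead", date(2026, 2, 6)),
    AuditItem("Missing canonical on /features", "Add a self-referencing canonical",
              1, 4, "Dev team", date(2026, 2, 6)),
]
for item in prioritize(items):
    print(f"Tier {item.tier} | {item.issue} | {item.owner} | due {item.due}")
```

Capping the output at ten items is deliberate: it forces the short, owned list described above instead of an 80-row spreadsheet.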

Revisit your baseline metrics from Step 1 at 30, 60, and 90 days after implementation to check the results. If the numbers are not moving after 90 days, then your next audit starts there, and the cycle repeats with sharper priorities each time.

How often should you run an SEO audit?

- Every quarter: Standard for most sites under 500 pages.

- Monthly: Content-heavy publications, e-commerce sites, or any site undergoing active changes.

- Weekly monitoring: Check Google Search Console for indexing errors, crawl errors, and manual actions.

An SEO audit schedule may vary depending on the type of business in question or the SEO professional/consulting agency performing the procedure.

Frequently asked questions

Can I do an SEO audit for free?

Yes, and a genuinely useful one. Google Search Console, Screaming Frog, PageSpeed Insights, and Contentpen cover most aspects of an SEO audit, so you can run one yourself.

Can ChatGPT do an SEO audit?

Yes and no. ChatGPT can help you think through content gaps, suggest title tag improvements, and analyze a piece of content for on-page factors. What it cannot do is crawl your site, pull live data from GSC, or analyze a real backlink profile.

How much does an SEO audit cost?

A DIY audit using free tools costs only your time. Professional audits from freelancers typically range from $100 to $1,000, while comprehensive SEO audits from agencies can cost somewhere around $5,000 to $30,000+, depending on the website.

How long does an SEO audit take?

A focused audit of a site under 50 pages can be done in 1 day. Sites with 100 to 500 pages typically take 3-4 days. Large sites with complex architectures can take 1 week or more.

Which tools should I use for an SEO audit?

Start with Google Search Console for the indexing status of your pages. Run Screaming Frog to crawl your site and surface technical issues. Use PageSpeed Insights for Core Web Vitals. Use the Rich Results Test for schema validation. Use Contentpen for content gap analysis and content refresh.

What is the difference between a site audit and an SEO audit?

A site audit typically refers specifically to the technical crawl: broken links, redirect issues, indexing problems, and similar technical health checks. An SEO audit is broader. It includes the technical crawl but also covers content quality, on-page optimization, backlinks, local SEO, and AI visibility.